Throughput Performance between SLAC and CERN

Les Cottrell.

Page created: May 2, 2000, last update: June 24, 2000.


- FERMI -> CERN : MAX 30.1 Mbps
- CERN -> FERMI : MAX 30.1 Mbps
- SLAC -> CERN : MAX 15 Mbps
- CERN -> SLAC : MAX 26 Mbps
- FERMI -> SLAC : MAX 29.6 Mbps
- SLAC -> FERMI : MAX 24.2 Mbps

Thus it appears we can get less bandwidth from SLAC to anywhere than from anywhere to SLAC.

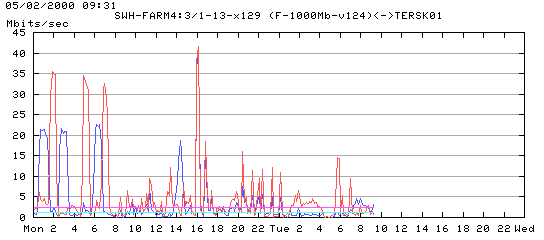

Further tests were made May 2nd, 2000; this time Gilles waited until the traffic leaving or entering SLAC was very low. A third set of tests started Monday 1st May at 0:25 (US SLAC time). The plot below shows the traffic at that time:

The measurement results (15 transfers of one file of 1467582976 bytes for SLAC-CERN and 17 transfers of one file of 1467582976 bytes for CERN-SLAC) showed:

4cottrell@flora01:~>sudo /afs/slac/g/scs/bin/pathchar cernh9-s5-0.cern.ch

Password:

pathchar to cernh9-s5-0.cern.ch (192.65.184.142)

mtu limitted to 1500 bytes at FLORA01.SLAC.Stanford.EDU (134.79.16.29)

doing 32 probes at each of 64 to 1500 by 44

0 FLORA01.SLAC.Stanford.EDU (134.79.16.29)

| 30 Mb/s, 197 us (797 us)

1 RTR-CORE1.SLAC.Stanford.EDU (134.79.19.2)

| 54 Mb/s, 193 us (1.41 ms)

2 RTR-CGB6.SLAC.Stanford.EDU (134.79.135.6)

| 112 Mb/s, 65 us (1.64 ms)

3 RTR-DMZ.SLAC.Stanford.EDU (134.79.111.4)

| 106 Mb/s, -43 us (1.67 ms)

4 ESNET-A-GATEWAY.SLAC.Stanford.EDU (192.68.191.18)

| 30 Mb/s, 27.9 ms (57.8 ms)

5 chicago1-atms.es.net (134.55.24.17)

| 28 Mb/s, 543 us (59.4 ms)

6 206.220.243.32 (206.220.243.32)

-> 206.220.243.32 (2)

| 29 Mb/s, 56.3 ms (172 ms), 1% dropped

7?cernh9-s5-0.cern.ch (192.65.184.142)

7 hops, rtt 170 ms (172 ms), bottleneck 28 Mb/s, pipe 593027 bytes
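The "pipe" figure in the summary line above appears to be the bandwidth-delay product of the path, i.e. the number of bytes that must be in flight to keep the bottleneck full. The following is a minimal sketch of that arithmetic, not pathchar's own code; the function name is hypothetical and the inputs are the rounded values printed above (28 Mb/s, 170 ms), so the result only approximates the 593027 bytes that pathchar reports.

```python
def bandwidth_delay_product_bytes(bottleneck_mbps: float, rtt_ms: float) -> float:
    """Bytes in flight needed to fill a path with this bottleneck rate and RTT."""
    bits_per_second = bottleneck_mbps * 1e6   # Mb/s taken as 10^6 bits/s
    rtt_seconds = rtt_ms / 1000.0
    return bits_per_second * rtt_seconds / 8.0


# Rounded values from the pathchar summary line (SLAC to CERN).
pipe = bandwidth_delay_product_bytes(28, 170)
print(f"pipe is roughly {pipe:.0f} bytes ({pipe / 1024:.0f} KiB)")
```

A single TCP stream would need a window of about this size to fill the path on its own, which is one reason the tests on this page use many parallel streams.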

ccdevsn1:csh[10] pathchar tersk01.slac.stanford.edu

pathchar to tersk01.slac.stanford.edu (134.79.125.21)

doing 32 probes at each of 64 to 1500 by 32

0 localhost

| 31 Mb/s, 284 us (0.96 ms)

1 Lyon-ANDA.in2p3.fr (134.158.104.100)

| 84 Mb/s, 120 us (1.34 ms)

2 Lyon-TIF.in2p3.fr (134.158.240.6)

-> 192.70.69.141 (1404)

-> 192.70.69.13 (1423)

| 1.5 Mb/s, 3.19 ms (15.6 ms)

3?Cern1.in2p3.fr (192.70.69.10)

| 19 Mb/s, 653 us (17.6 ms)

4 cernh9.cern.ch (192.65.185.9)

| 31 Mb/s, 55.9 ms (130 ms)

5 ar1-chicago.cern.ch (192.65.184.141)

| 27 Mb/s, 664 us (132 ms), +q 1.04 ms (3.56 KB) *2

6 chicago-nap.es.net (206.220.243.85)

| 30 Mb/s, 27.9 ms (188 ms), +q 1.02 ms (3.80 KB) *2

7 slac1-atms.es.net (134.55.24.13)

| 73 Mb/s, 210 us (188 ms), +q 1.03 ms (9.34 KB)

8 RTR-DMZ.SLAC.Stanford.EDU (192.68.191.17)

Looking at the Surveyor one-way measurements for April 17th between SLAC and CERN, the effect of the file transfer on the one-way delays is apparent (note the large increases between 20:00 and 22:00 UTC, i.e. when Gilles was making his tests). It may be significant that the impact on the delays from CERN to SLAC is larger (an increase from 80 msec to 220 msec) than from SLAC to CERN (an increase from 80 msec to 110 msec). During this time the routes appeared to be stable, and no packet loss was reported by Surveyor.

However, the bbftp thruput was still measured at about 16Mbps with 10 streams, from tersk01.slac.stanford.edu to sunstats.cern.ch (the iperf thruput from tersk01 to sunstats was measured at ~25Mbits/sec).

From datamove3:

- 2 threads, no compression: 7.5 Mb/s
- 4 threads, no compression: 12.4 Mb/s
- 5 threads, no compression: 13.6 Mb/s
- 6 threads, no compression: 19.1 Mb/s
- 8 threads, no compression: 20.1 Mb/s
- 10 threads, no compression: 19.9 Mb/s
- 5 threads, with compression: 13.6 Mb/s
- 8 threads, with compression: 6.3 Mb/s
- 10 threads, with compression: 7.4 Mb/s

From tersk02:

- 10 threads, with compression: 26.4 Mb/s
- Second try: 25.1 Mb/s

From shire01:

- 10 threads, with compression: 37.9 Mb/s

So it appears that the network is fine; the throughput is limited by the datamove3 machine itself when we use compression. Dominique saw the load on datamove3 increase from ~5 to ~17 when I was trying to use 10 threads with compression.
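To see where adding threads stops helping, the uncompressed datamove3 figures above can be divided by the thread count. This is only an illustrative sketch; the variable and function names are hypothetical, and the numbers are copied from the measurements listed above.

```python
# (threads, aggregate Mb/s) from datamove3 without compression.
no_comp = [(2, 7.5), (4, 12.4), (5, 13.6), (6, 19.1), (8, 20.1), (10, 19.9)]


def per_thread(results):
    """Return (threads, Mb/s per thread) for each measurement."""
    return [(n, round(mbps / n, 2)) for n, mbps in results]


for threads, rate in per_thread(no_comp):
    print(f"{threads:2d} threads: {rate} Mb/s per thread")
```

The per-thread rate falls from 3.75 Mb/s at 2 threads to 1.99 Mb/s at 10 threads, and the aggregate is flat at about 20 Mb/s from 8 threads on, so extra threads beyond that point bring no gain on this host.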

Randy Melen reports 6/13/00:

I've watched datamove3 today and see 8 bbftp processes running, though sleeping. I haven't seen the "load" go above 1.3, and typically it is much lower. I see CPU usage typically less than 10%. I conclude that nothing is being done on this system whenever I watch it! So I need to know when these transfers are being done. When datamove3 was purchased, it was expected to do the normal "datamove-like" work and so only 1GB of memory was allocated. Maybe that's not enough now for this different kind of use. Also it has 4 CPUs at 336MHz, relatively modest today, but sufficient probably for "datamove-like" work -- but not enough if you're compressing multi-gigabytes of data and shoving it out a Gb Ethernet interface that also soaks up CPU capacity with a high interrupt level.

Two tests that would be interesting would be:

To ascertain the possible impact of the high performance testing on interactive applications that require low, consistent RTT and low loss, we ran IPERF from SLAC (oceanus.slac.stanford.edu) and CERN (sunstats.cern.ch) and simultaneously measured the ping RTT and loss from both ends. The results shown below appear to indicate little difference in the RTT and loss whether or not we were running IPERF:

| | Min | Avg | Max | Loss |
|---|---|---|---|---|
| CERN > SLAC without IPERF | 189 | 251 | 435 | 0/380 |
| SLAC > CERN without IPERF | 181 | 221 | 424 | 0/100 |
| CERN > SLAC with IPERF | 180 | 288 | 405 | 2/604 |
| SLAC > CERN with IPERF | 182 | 289 | 397 | 4/607 |

The IPERF was with 15 streams and windows of 256Kbytes and ran for 10 minutes. The test was started at 10:29am Saturday 6/24/00. Running IPERF we were able to get about 9.4Mbps thruput.
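Two quick calculations help put these numbers in context: the ping loss as a percentage, and the ceiling that a 256 Kbyte window places on one TCP stream (at most one window per round trip). This is a sketch under stated assumptions: the RTT figures are taken to be milliseconds, 256 Kbytes is taken as 256 × 1024 bytes, and the function names are hypothetical.

```python
def loss_percent(lost: int, sent: int) -> float:
    """Packet loss as a percentage of packets sent."""
    return 100.0 * lost / sent


def window_limited_mbps(window_bytes: int, rtt_ms: float, streams: int = 1) -> float:
    """Upper bound on TCP throughput: one window per round trip per stream."""
    return streams * window_bytes * 8 / (rtt_ms / 1000.0) / 1e6


# Loss with IPERF running, from the measurements above.
print(f"CERN > SLAC: {loss_percent(2, 604):.2f}% loss")
print(f"SLAC > CERN: {loss_percent(4, 607):.2f}% loss")

# Window ceiling, assuming the minimum RTT of about 180 ms.
window = 256 * 1024
print(f"one stream:  {window_limited_mbps(window, 180):.1f} Mbps")
print(f"15 streams:  {window_limited_mbps(window, 180, streams=15):.1f} Mbps")
```

Under these assumptions the window ceiling is well above the 9.4 Mbps actually achieved, so the window size by itself does not account for the low IPERF throughput.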

Looking at the router utilization graph for the SLAC ESnet line (see below), it appears something else was making heavy continuous use of the link for many hours, since the outbound utilization from SLAC was over 70% for at least 24 hours before our test was made (10:00am PDT) and through our test. Further investigation revealed that Dominique Boutigny was running bbftp between SLAC and IN2P3 at this time. He is using bbftp in production now to transfer data to IN2P3 in Lyon, and in the week from 6/19/00 thru 6/25/00 he successfully transferred between 300 and 350 GBytes. Note that IN2P3 is accessed via CERN.
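For comparison with the peak rates quoted elsewhere on this page, the weekly production volume can be turned into an average sustained rate. This is a rough sketch only: it assumes 1 GByte = 10^9 bytes and a full 7-day period, and the function name is hypothetical.

```python
def average_mbps(gbytes: float, days: float) -> float:
    """Average rate in Mbps for a volume in GBytes moved over a number of days."""
    bits = gbytes * 1e9 * 8          # assumes decimal GBytes
    seconds = days * 86400
    return bits / seconds / 1e6


low = average_mbps(300, 7)
high = average_mbps(350, 7)
print(f"average sustained rate: {low:.2f} to {high:.2f} Mbps")
```

Under these assumptions the week's transfers average roughly 4 to 4.6 Mbps around the clock.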

The SLAC ESnet link is also being heavily used to transfer Monte Carlo data from INFN/Rome, IN2P3 and Colorado to SLAC, and for LLNL to write directly into the Objectivity federation. In addition Caltech will soon be starting to use the link heavily.

Since somebody was making high performance tests when we made the ping measurements without IPERF running, we need to look at the longer term PingER RTTs and losses and see if there is a correlation with the utilization.

The PingER RTT (red) and Loss (blue) results (one point per half hour interval) for SLAC to CERN for Monday 19th June thru Sunday 25th June are shown below. It can be seen that there is a noticeable impact on RTT; however, the loss rates appear to be minimally impacted by the utilization.