LCLS BEAM SYNCHRONOUS DATASTORE USER GUIDE

Greg White, Michael Davidsaver, SLAC, October 2018.

Revision 1.1, 19-Dec-2018, Added System Management.

Doug Murray, SLAC, May 2022.

Revision 1.2, 04-May-2022, Updated to reflect system changes.

This document helps people wishing to do offline "big data" analysis of the Linac Coherent Light Source (LCLS) accelerator systems, to access and interpret data files of pulse-synchronous values of diagnostic device measurements of the accelerator, such as Beam Position Monitor data.

The data files described here capture all of the data from all diagnostic measurement devices in LCLS, for every pulse. That is, all the pulse data, all the time.

This section describes the pulse synchronous data files of LCLS, for physicists and others wishing to get the files and analyse the data.

Primarily, the data is composed of Beam Position Monitor (BPM) data, Klystron data, 300 other assorted pulse synchronous signals, plus some associated beam data, such as charge on the cathode, measured electron energy and recorded photon energy.

There are two pathnames that provide access to the data, depending on the network or computer being used. These paths reference the same directories, and the same data files exist in both.

GPFS - The SDF facility (the SLAC Shared Scientific Data Facility, or SSSDF, S3DF) and other systems shown in the table below, can access data at:

/gpfs/slac/staas/fs1/g/bsd/BSAService/data/

Systems on the production (active accelerator) networks will not have access.

NFS - Most SLAC interactive computer systems can access data at:

/nfs/slac/g/bsd/BSAService/data/

Systems on the production (active accelerator) networks will not have access.

| Computer or Facility | Details | GPFS Access | NFS Access | Comments |

|---|---|---|---|---|

| rhel6-64 | Linux RedHat Enterprise v6 | Yes | Yes | SLAC's primary computer cluster for interactive analysis. |

| centos7 | Linux Centos 7 | Yes | Yes | Another computer cluster for interactive analysis. |

| jupyter | | Yes | No | SLAC's python web-based notebook facility. The Machine Learning images (as selected from the Spawner) have been tested and can see the GPFS mount point. Avoid the NFS mount point as it has automount issues. |

| SDF | Shared Data Facility | Yes | No | SLAC's Shared Scientific Data Facility. |

| LSF | Load Sharing Facility | Some | No | SLAC's High Performance Computing Facility. For LSF access, the "bullet" cluster (bsub -R bullet) can access GPFS and other clusters are being added. |

The files are arranged in a directory structure reflecting year, ordinal month, ordinal day of capture. For example, one can find data acquired on December 6, 2020 at:

/gpfs/slac/staas/fs1/g/bsd/BSAService/data/2020/12/06/

or

/nfs/slac/g/bsd/BSAService/data/2020/12/06/

As mentioned above, these two directory paths access the same files.
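As an illustration of this layout, the day directory for a given capture date could be built as in the following minimal Python sketch. The function name `day_directory` and the constant names are hypothetical, chosen only for this example; the two root paths are the ones quoted above.

```python
from datetime import date
from pathlib import PurePosixPath

# Root paths quoted from this guide; both reach the same files.
GPFS_ROOT = PurePosixPath("/gpfs/slac/staas/fs1/g/bsd/BSAService/data")
NFS_ROOT = PurePosixPath("/nfs/slac/g/bsd/BSAService/data")


def day_directory(day: date, root: PurePosixPath = GPFS_ROOT) -> PurePosixPath:
    """Return <root>/YYYY/MM/DD for the given capture date."""
    return root / f"{day.year:04d}" / f"{day.month:02d}" / f"{day.day:02d}"


print(day_directory(date(2020, 12, 6)))
# /gpfs/slac/staas/fs1/g/bsd/BSAService/data/2020/12/06
print(day_directory(date(2020, 12, 6), NFS_ROOT))
# /nfs/slac/g/bsd/BSAService/data/2020/12/06
```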

NOTE: For some days prior to deployment of this system, an emergency stop-gap system that periodically wrote out all the data from the BSA buffers was deployed (thanks to quick work by Mike Zelazny). The data of that prior system is still available, at time of writing, at:

/gpfs/slac/staas/fs1/g/bsd/faultbuffer/

or

/nfs/slac/g/bsd/faultbuffer/

The following are examples of getting the pulse data files from GPFS or NFS to a MacOS computer (OS X) or other Unix based machine. The tools used are Secure Shell (ssh) and Secure Copy (scp), which are typically included with MacOS X and Linux. Open a terminal window to type the following commands:

To get a remote directory listing:

% ssh -x -l <yourusername> rhel6-64.slac.stanford.edu ls /nfs/slac/g/bsd/BSAService/data/2018/10/22

total 19081952

drwxr-xr-x 2 mad rl 32768 Oct 22 16:35 ./

drwxr-xr-x 6 mad rl 512 Oct 22 17:35 ../

-rw-r--r-- 1 mad rl 840476543 Oct 21 17:35 CU_HXR_20181022_003549.h5

-rw-r--r-- 1 mad rl 845860287 Oct 21 18:35 CU_HXR_20181022_013553.h5

-rw-r--r-- 1 mad rl 841526072 Oct 21 19:35 CU_HXR_20181022_023555.h5

...

To copy files one at a time to your own computer, you can use the scp command (optionally with compression, -C). The following is an example of getting 2 data files. Notice there is a dot ('.') after a space at the end of each line, which represents the current directory in which you're working on your own computer:

% scp -C <yourusername>@rhel6-64.slac.stanford.edu:/nfs/slac/g/bsd/BSAService/data/2018/10/22/CU_HXR_20181022_003549.h5 .

% scp -C <yourusername>@rhel6-64.slac.stanford.edu:/nfs/slac/g/bsd/BSAService/data/2018/10/22/CU_HXR_20181022_013553.h5 .

NOTE: The scp command might not work if your login session on the remote computer prints extra messages. To be specific, if you have a .profile, .login, .bashrc or similar startup script file on the remote computer which prints any type of message, the scp command might fail.

Files downloaded over the eduroam wifi transfer at between 1.2 MB/s and 8.5 MB/s, i.e. roughly 2.5 to 15 minutes per file, depending on wifi load.

One can also get multiple files at a time, or whole directories. For such operations, see the scp -r and rsync commands.

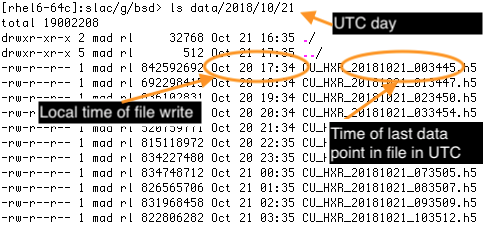

Coordinated Universal Time (UTC) (basically Greenwich Mean Time - GMT) is used throughout the system (or as engineers call it, POSIX time). Consequently:

The day/ directory is the day as of UTC, which is 7 hours ahead of Pacific Daylight Time (PDT) and 8 hours ahead of Pacific Standard Time (PST). So data taken during the last 7 hours (PDT) or 8 hours (PST) of the local SLAC day is found in the directory of the next day.

The data files are named with the UTC datestamp of the END of their data taking period.

The data in the files is timestamped in "seconds past epoch" (and nanoseconds past that second), counted from midnight on January 1st, 1970, UTC. See Data Description.

Hence, the data timestamps match the file names match the directory structure.
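The relation between a local Pacific time, the UTC day directory and the UTC stamp in a file name can be sketched in Python as follows. This is a minimal illustration, not part of the BSA software: the helper names `utc_day_subpath` and `file_end_time` are hypothetical, and fixed PDT/PST offsets are assumed rather than a full time-zone database.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets keep the sketch free of time-zone database lookups.
PDT = timezone(timedelta(hours=-7), "PDT")  # Pacific Daylight Time
PST = timezone(timedelta(hours=-8), "PST")  # Pacific Standard Time


def utc_day_subpath(local_time: datetime) -> str:
    """Return the UTC YYYY/MM/DD sub-path for a timezone-aware local time."""
    return local_time.astimezone(timezone.utc).strftime("%Y/%m/%d")


def file_end_time(filename: str) -> datetime:
    """Parse the UTC end stamp from a name like CU_HXR_20181022_003549.h5."""
    stem = filename.rsplit(".", 1)[0]
    date_part, time_part = stem.split("_")[-2:]
    stamp = datetime.strptime(date_part + time_part, "%Y%m%d%H%M%S")
    return stamp.replace(tzinfo=timezone.utc)


evening = datetime(2018, 10, 21, 17, 35, 49, tzinfo=PDT)
print(utc_day_subpath(evening))   # 2018/10/22
end = file_end_time("CU_HXR_20181022_003549.h5")
print(end.astimezone(PDT))        # 2018-10-21 17:35:49-07:00
```

The example file name is one of those in the directory listing shown earlier; its UTC stamp falls on 22 October although the data ended on the evening of 21 October local time.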

The files contain data arranged conceptually in tables. Each file covers 60 minutes or holds 1.5 GB, whichever comes first. Typically the files are 60 minutes and 1.2 GigaBytes. No pulse should be missed between files.

The data within the tables are acquired from EPICS Process Variables (PVs), which provide their data when enabled by the arrival of the beam. Technically speaking, they are enabled by a signal from the accelerator's timing system which also drives the production of the electron beam.

The data files are compatible with Matlab v6, written in HDF5 format (Hierarchical Data Format) and each filename has the suffix '.h5'. Each file contains all beam synchronous PVs for all beam pulses encountered during a specific acquisition cycle. That is, a file constitutes a logical table with potentially:

~120 pulses/second * 60 seconds * 60 minutes = 432,000 rows, having

~2000 columns, each having PV data

432,000 x 2000 = 864 million data values

Each beam synchronous PV is represented as one HDF5 Dataset within the h5 file. Two extra datasets supply the timestamp of the data in sufficient precision to identify a single pulse: "secondsPastEpoch" and "nanoseconds". Hence the timing system's PID is not explicitly recorded.

The secondsPastEpoch is integer seconds on the same time base as POSIX time, that is, since midnight on 1st January 1970 in Greenwich. The nanoseconds entry gives the whole nanoseconds past the secondsPastEpoch value in the same row.

See Matlab help below for how to easily convert these timestamps to human readable local time.
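Outside Matlab, the same two fields can be combined in a few lines. The following Python sketch is illustrative only: the function names are hypothetical, the input values are made up for the example, and a fixed PDT offset is assumed for the local-time conversion.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
PDT = timezone(timedelta(hours=-7), "PDT")  # assumed fixed offset for the example


def pulse_time(seconds_past_epoch: int, nanoseconds: int) -> datetime:
    """Combine one row's two timestamp fields into a UTC datetime.

    datetime resolves microseconds only, so the nanosecond field is truncated.
    """
    return EPOCH + timedelta(seconds=int(seconds_past_epoch),
                             microseconds=int(nanoseconds) // 1000)


def total_nanoseconds(seconds_past_epoch: int, nanoseconds: int) -> int:
    """Exact integer key for a pulse; avoids rounding in a float seconds value."""
    return int(seconds_past_epoch) * 1_000_000_000 + int(nanoseconds)


t = pulse_time(1540168549, 123456789)
print(t.isoformat())                   # 2018-10-22T00:35:49.123456+00:00
print(t.astimezone(PDT).isoformat())   # 2018-10-21T17:35:49.123456-07:00
print(total_nanoseconds(1540168549, 123456789))
```

Keeping the two fields as integers, as in `total_nanoseconds`, is one way to compare or join pulses exactly; a double-precision seconds value cannot hold full nanosecond precision at present-day epoch values.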

NOTE: Some data files may be smaller than the potential size shown here; that is expected when the beam rate drops, since the data is only collected on timing beam events.
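To give a feel for how the table size scales with beam rate, the row and value counts can be worked out as below. This is a small arithmetic sketch in Python; the function names are hypothetical, the 2000-column figure is the approximate one quoted above, and the 30 Hz and 10 Hz rates are illustrative values only.

```python
SECONDS_PER_FILE = 60 * 60  # one nominal 60-minute acquisition period


def expected_rows(beam_rate_hz: float, seconds: int = SECONDS_PER_FILE) -> int:
    """One row per beam pulse: rate times duration."""
    return int(beam_rate_hz * seconds)


def expected_values(beam_rate_hz: float, n_pvs: int = 2000) -> int:
    """Rows times PV columns (about 2000 columns, as quoted above)."""
    return expected_rows(beam_rate_hz) * n_pvs


for rate in (120, 30, 10):
    print(rate, expected_rows(rate), expected_values(rate))
# 120 432000 864000000
# 30 108000 216000000
# 10 36000 72000000
```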

Data collection of BSA data started with this system on 19-Oct-2018.

In general, the system does an excellent job of recording every pulse, including at file boundaries. Note that there are a few gaps in the data though, such as times when we run at 0 rate, and at system commissioning we stopped and restarted a few times. We have left these data files in the collection.

As this project is deployed and improved, we add PVs and iron out system issues of the new technology being used. This log shows which PVs you can expect to be missing (or should we discover any to be wrong - none so far) in the early data sets recorded.

| Time Period | Issue and followup |

|---|---|

| 19-Oct-18 to ~ 17:00 22-Oct-18 PST | No PVs of TSE -1 were included. In practice this meant only BPMS and BLD data were included over that first weekend |

| 23-Oct-18 17:35 to 24-Oct-18 11:28 | Gap in data recording due to unrelated process filling tmp disk space on intermediate host |

| 22-Oct-18 to time of writing | Every signal that has no deadband, that is MDEL == 0, will have many values == NaN. That is, all data points where the PV doesn't change value, will have NaN after the time of data change, for all timestamped entries, until the time of next data change. Eg PICS in BSY. We expect to fix this by "backfill" very soon. |

| 22-Oct-18 to time of writing | No PV that updates > 120 Hz, eg Beam Loss Monitors are included in the data. |
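Until such NaN values are filled in at the source, an analysis script may want to carry the last recorded value forward. The sketch below shows one way to do that in Python. It assumes, as the table entry above describes, that a NaN means "unchanged since the last recorded value"; it is not the project's planned backfill, and the function name `forward_fill` is hypothetical.

```python
import math


def forward_fill(values):
    """Replace each NaN with the most recent non-NaN value before it.

    Leading NaNs (before any real value is seen) are left as NaN.
    """
    filled = []
    last = math.nan
    for v in values:
        if not math.isnan(v):
            last = v
        filled.append(last)
    return filled


raw = [math.nan, 1.5, math.nan, math.nan, 2.0, math.nan]
print(forward_fill(raw))  # [nan, 1.5, 1.5, 1.5, 2.0, 2.0]
```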

Data access from Matlab is best described with some examples. Before starting Matlab, we copy an HDF file from one of the filesystem paths mentioned above. The following command will copy data acquired on October 22, 2018 to a directory named "bsd" in the home directory of the current user ("~"). The "bsd" directory must already exist. The '-C' option will compress the file during the transfer:

% scp -C <yourusername>@rhel6-64.slac.stanford.edu:/nfs/slac/g/bsd/BSAService/data/2018/10/22/CU_HXR_20181022_003549.h5 ~/bsd

Now we can start Matlab and simply load all pulse data from the local HDF5 file. It will be presented as a simple collection of array values, each named after a PV:

>> load('~/bsd/CU_HXR_20181022_003549.h5','-mat');

The load may take a few minutes!

To see which pulse synchronous process variables' data you have, simply use the Matlab who command:

>> who

Having loaded the data, convert seconds and nanoseconds past UTC base time (aka POSIX time) to local time:

>> ts=double(secondsPastEpoch)+double(nanoseconds)*1e-9; % Make vector of real seconds POSIX time

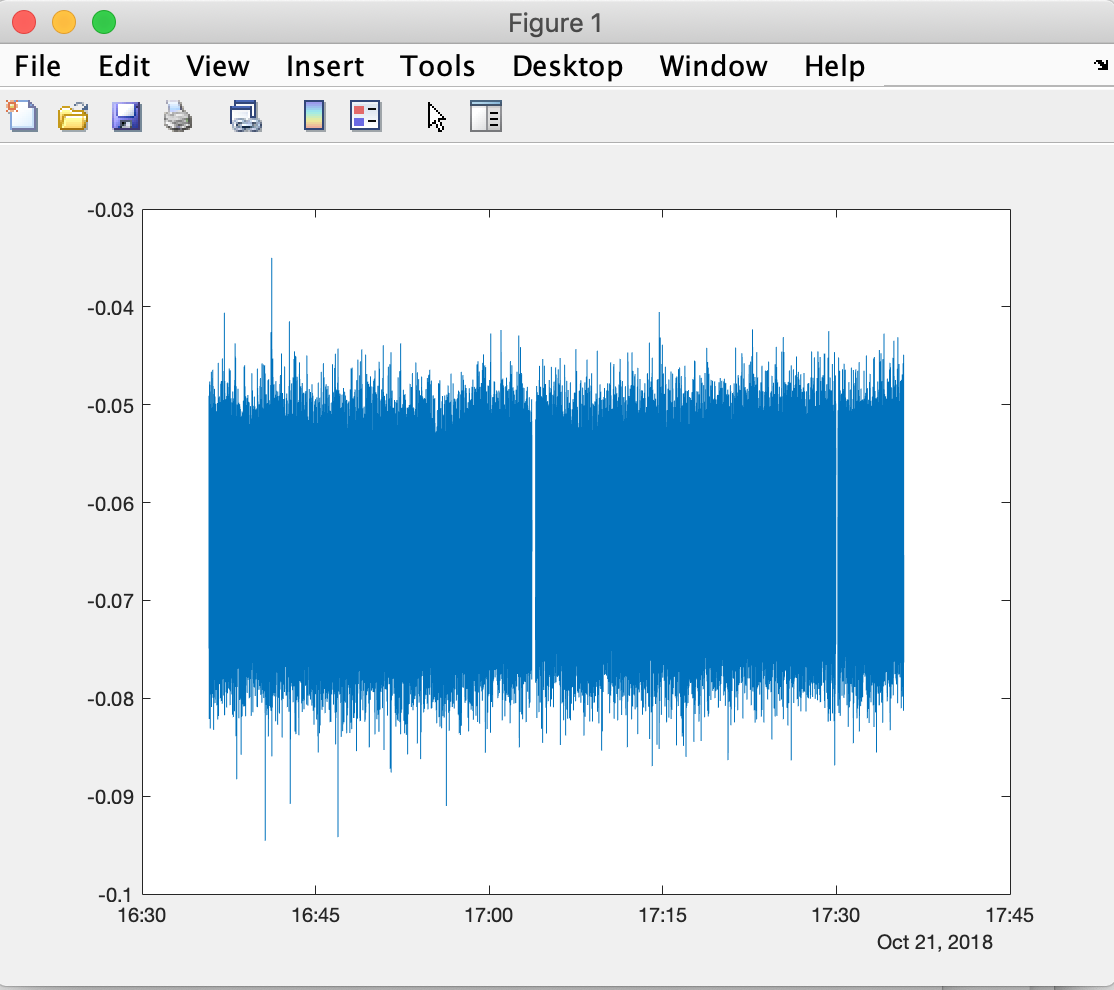

>> t=datetime(ts,'ConvertFrom','posixtime','TimeZone','America/Los_Angeles'); % Make vector of local times

Plot the data of one PV against local time (having loaded the data and converted to local time as above):

>> plot(t,BPMS_IN20_525_X); % Plot value against local time

Before loading all the data of a file, you can quickly check the time range it covers by reading only the secondsPastEpoch dataset and examining its first and last values.

>> tssecs=h5read('~/bsd/CU_HXR_20181022_003549.h5','/secondsPastEpoch');

>> datetime([tssecs(1);tssecs(end)],'ConvertFrom','posixtime','TimeZone','America/Los_Angeles')

ans =

2x1 datetime array

21-Oct-2018 16:35:47

21-Oct-2018 17:35:49

Examine which PVs' data are in the h5 data file, using h5info:

>> info=h5info('~/bsd/CU_HXR_20181022_013553.h5');

>> PVnames={info.Datasets.Name}

Load just one PV variable's data from the h5 data file, using h5read:

>> bpms_ltu1_910_y=h5read('~/bsd/CU_HXR_20181022_013553.h5','/BPMS_LTU1_910_Y');

Load all data from the h5 file as a Matlab "Table" (Matlab 2016 and above):

>> d0=struct2table(load('~/bsd/CU_HXR_20181022_003549.h5','-mat'));

Having loaded as a Matlab Table, examine which Variables you have in the table:

>> d0.Properties.VariableNames

Look at the data of 1 variable:

>> d0.BPMS_UND1_3395_X

You can extract data from a specific time for a specific set of PVs using the fetch_bsa_slice.m script (located at /afs/slac/g/lcls/tools/matlab/toolbox/). For example, to extract data between 8am and 9am Pacific time on February 25th, 2021 for all BPMs and klystrons:

>> d=fetch_bsa_slice({'2021-02-25 08:00:00','2021-02-25 09:00:00'},{'BPMS','KLYS'});

To see the list of extracted PVs:

>> d.ROOT_NAME

To access the data matrix, rows in the same order as d.ROOT_NAME:

>> d.the_matrix

For more information on optional inputs to fetch_bsa_slice:

>> help fetch_bsa_slice

The data files are presently owned by the user laci, in the bsd (Beam Synchronous Data) group, and are readable by everyone.

The Beam Synchronous Acquisition Service (BSAS) consists of two servers, called the Collector and FileWriter, which run continuously regardless of the accelerator operating state.

There are currently 4 Collector servers and 4 FileWriters running continuously to acquire and save BSAS data. An instance of the pair is deployed for the target beamlines on each LCLS accelerator. That is, there is an instance of Collector and FileWriter associated with each of the following operating scenarios:

| Accelerator | Target Beamline | Abbreviated Reference |

|---|---|---|

| Normal Conducting | Hard X-ray Undulator path | CUHXR |

| Normal Conducting | Soft X-ray Undulator path | CUSXR |

| Superconducting | Hard X-ray Undulator path | SCHXR |

| Superconducting | Soft X-ray Undulator path | SCSXR |

The Abbreviated Reference is commonly used as a suffix in filenames, log file entries and elsewhere to indicate the source of data.

NOTE: The CU refers to the convention used in SLAC's accelerator design where the Non-superconducting accelerator is referred to as the Copper (CU) accelerator. Many other references specify NC and SC for normal conducting and superconducting.

The Collector runs on the production network, currently on the computer named lcls-daemon0, as an EPICS soft IOC written in C++. All four instances currently run on the same computer.

The software collects data from several EPICS PVs, each of which is enabled by the presence of beam. These PVs are currently listed in a configuration file and as each Collector works, it builds tables of data having a column for each PV and a row associated with each arriving beam pulse.

The resulting table is itself represented as a PV.

Each Collector supplies three PVs associated with its current acquisition process. For example, the Collector associated with the normal conducting linac, targeting the hard x-ray undulators will make the following PVs available as it works:

1. BSAS:SYS0:1:CUHXR_SIG - This contains an ascii list of all PVs currently being acquired.

2. BSAS:SYS0:1:CUHXR_STS - This contains a readable status indication of the current acquisition cycle.

3. BSAS:SYS0:1:CUHXR_TBL - This contains all of the data in a table format, for the current acquisition cycle.

Because the Collector produces its own PVs, they can be accessed using EPICS v7 tools such as pvget and eget. Currently, that software will only work if the following environment variables are set:

export EPICS_PVA_AUTO_ADDR_LIST=TRUE

export EPICS_PVA_ADDR_LIST="lcls-daemon0 $EPICS_PVA_ADDR_LIST"

These are expected to be set automatically in future, but currently must be set manually.
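When the EPICS tools are launched from a script rather than an interactive shell, the same two variables can be prepared programmatically. The following Python sketch mirrors the two export lines above; the function name `pva_environment` is hypothetical, and nothing here is part of the EPICS distribution.

```python
import os


def pva_environment(base_env=None, host="lcls-daemon0"):
    """Return a copy of the environment with the two PVA variables set."""
    env = dict(os.environ if base_env is None else base_env)
    env["EPICS_PVA_AUTO_ADDR_LIST"] = "TRUE"
    # Prepend the Collector host to any address list already present.
    existing = env.get("EPICS_PVA_ADDR_LIST", "")
    env["EPICS_PVA_ADDR_LIST"] = f"{host} {existing}".strip()
    return env


env = pva_environment({"EPICS_PVA_ADDR_LIST": "otherhost"})
print(env["EPICS_PVA_AUTO_ADDR_LIST"])  # TRUE
print(env["EPICS_PVA_ADDR_LIST"])       # lcls-daemon0 otherhost
```

The returned dictionary could then be handed to `subprocess.run([...], env=env)` when calling a tool such as pvget; "otherhost" is a placeholder value used only to show the prepend behaviour.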

The pvget program can be used to retrieve status information:

drm@mccas0 2803>pvget BSAS:SYS0:1:CUHXR_STS

BSAS:SYS0:1:CUHXR_STS 2022-05-05 15:23:01.034

PV connected #Event #Bytes #Discon #Error #OFlow

ACCL:IN20:300:L0A_ACUHBR true 10 1220 0 0 0

ACCL:IN20:300:L0A_PCUHBR true 10 1220 0 0 0

ACCL:IN20:300:L0A_SACUHBR true 12 1464 0 0 0

...

NOTE: There are currently almost 1500 PVs being reported from this Collector, so the list will be long.
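With so many rows, it can help to filter the status text for PVs that need attention. The sketch below parses rows in the column layout shown in the sample output above (PV, connected, #Event, #Bytes, #Discon, #Error, #OFlow). It is a hypothetical helper, not an EPICS tool, and it assumes whitespace-separated columns exactly as printed in this guide; real output may be laid out differently.

```python
from typing import NamedTuple


class PvStatus(NamedTuple):
    name: str
    connected: bool
    events: int
    nbytes: int
    disconnects: int
    errors: int
    overflows: int


def parse_status(text: str) -> list:
    """Parse the data rows found below the 'PV connected ...' header line."""
    rows = []
    for line in text.splitlines():
        fields = line.split()
        # Data rows have seven fields, the second being true or false.
        if len(fields) != 7 or fields[1] not in ("true", "false"):
            continue
        name, connected, *counts = fields
        rows.append(PvStatus(name, connected == "true", *map(int, counts)))
    return rows


def problems(rows):
    """Names of PVs that are disconnected or report errors or overflows."""
    return [r.name for r in rows if not r.connected or r.errors or r.overflows]


sample = """\
PV connected #Event #Bytes #Discon #Error #OFlow
ACCL:IN20:300:L0A_ACUHBR true 10 1220 0 0 0
ACCL:IN20:300:L0A_PCUHBR true 10 1220 0 0 0
ACCL:IN20:300:L0A_SACUHBR true 12 1464 0 0 0
"""
rows = parse_status(sample)
print(len(rows), problems(rows))  # 3 []
```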

For the normal conducting linac, delivering beam to the hard x-ray undulator beamline, one can access these PVs:

| PV Name | Purpose | Detail |

|---|---|---|

| BSAS:SYS0:1:CUHXR_SIG | PV List | EPICS record containing an ascii list of names of the PVs being acquired. |

| BSAS:SYS0:1:CUHXR_STS | Status | EPICS record containing printable current acquisition status. Reset with each new acquisition cycle. |

| BSAS:SYS0:1:CUHXR_TBL | Acquired Data | EPICS record containing all data acquired so far in the current acquisition cycle from all acquired PVs. |

Similarly, for the other accelerator and beamline Collectors, PVs are generated.

For the normal conducting linac, delivering beam to the soft x-ray undulator beamline, one can access these PVs:

| PV Name | Purpose |

|---|---|

| BSAS:SYS0:1:CUSXR_SIG | PV List |

| BSAS:SYS0:1:CUSXR_STS | Status |

| BSAS:SYS0:1:CUSXR_TBL | Acquired Data |

For the superconducting linac, delivering beam to the hard x-ray undulator beamline, one can access these PVs:

| PV Name | Purpose |

|---|---|

| BSAS:SYS0:1:SCHXR_SIG | PV List |

| BSAS:SYS0:1:SCHXR_STS | Status |

| BSAS:SYS0:1:SCHXR_TBL | Acquired Data |

For the superconducting linac, delivering beam to the soft x-ray undulator beamline, one can access these PVs:

| PV Name | Purpose |

|---|---|

| BSAS:SYS0:1:SCSXR_SIG | PV List |

| BSAS:SYS0:1:SCSXR_STS | Status |

| BSAS:SYS0:1:SCSXR_TBL | Acquired Data |
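The four sets of PV names above follow one pattern, which the short Python sketch below spells out. The names `BEAMLINES`, `SUFFIXES` and `collector_pvs` are hypothetical, introduced only for this illustration; the PV names it produces are the ones listed in the tables.

```python
BEAMLINES = ("CUHXR", "CUSXR", "SCHXR", "SCSXR")
SUFFIXES = {"SIG": "PV List", "STS": "Status", "TBL": "Acquired Data"}


def collector_pvs(beamline: str) -> dict:
    """Map each Collector PV name for a beamline to its purpose."""
    if beamline not in BEAMLINES:
        raise ValueError(f"unknown beamline reference: {beamline!r}")
    return {f"BSAS:SYS0:1:{beamline}_{suffix}": purpose
            for suffix, purpose in SUFFIXES.items()}


for name, purpose in collector_pvs("SCSXR").items():
    print(name, "-", purpose)
# BSAS:SYS0:1:SCSXR_SIG - PV List
# BSAS:SYS0:1:SCSXR_STS - Status
# BSAS:SYS0:1:SCSXR_TBL - Acquired Data
```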

NOTE: For these details, it is assumed the reader is familiar with basic elements of SLAC's production environment. Access to these files requires access to the laci group account on lcls-daemon0.

The Collectors run as EPICS soft IOCs, currently on a single processor named lcls-daemon0.

| Soft IOC Name | Purpose |

|---|---|

| sioc-sys0-ba11 | acquisition of PVs in the Normal Conducting Linac, targeting the hard x-ray undulators |

| sioc-sys0-ba12 | acquisition of PVs in the Normal Conducting Linac, targeting the soft x-ray undulators |

| sioc-sys0-ba21 | acquisition of PVs in the Superconducting Linac, targeting the hard x-ray undulators |

| sioc-sys0-ba22 | acquisition of PVs in the Superconducting Linac, targeting the soft x-ray undulators |

The startup sequence and configuration information is maintained under the directory named by the IOC environment variable:

laci@lcls-daemon0 11:24 AM 585>echo $IOC

/usr/local/lcls/epics/iocCommon

laci@lcls-daemon0 11:24 AM 586>cd $IOC/sioc-sys0-ba11

laci@lcls-daemon0 11:24 AM 587>ls -l

lrwxrwxrwx 1 softegr lcls 31 Mar 18 10:15 iocSpecificRelease -> ../../iocTop/bsaService/current

-rwxrwxr-x 1 softegr lcls 361 Mar 9 13:00 startup.cmd

The two files in each of the soft IOC directories support an isolated environment common to all soft IOCs:

| Filename | Purpose |

|---|---|

| iocSpecificRelease | Symbolic link to the current version of the soft IOC executables, configuration files and other EPICS-related files |

| startup.cmd | A startup script which provides a "wrapper" for the IOC environment |

The iocSpecificRelease directory contains the contents of the soft IOC as built with EPICS. The iocSpecificRelease entry is a symbolic link to the current version of this IOC. Several previous versions may exist; iocSpecificRelease points to the directory named current, which in turn points to the version currently being used.

The startup.cmd file sets an environment variable indicating the location of this soft IOC's directory area (pointed to by iocSpecificRelease) and provides itself as input to the executable IOC file within that current version. The script then executes commands within a common script located under /usr/local/lcls/epics/iocCommon/common/st.cmd.soft. This executes some other common scripts then finally refers back to the iocSpecificRelease directory, to run the IOC-specific st.cmd startup script.

As an example, the st.cmd file for the soft IOC sioc-sys0-ba11 mentioned above is located under

/usr/local/lcls/epics/iocCommon/sioc-sys0-ba11/iocSpecificRelease/iocBoot/lcls/sioc-sys0-ba11/st.cmd

The FileWriter software consists of the writing process itself and a monitoring program, both of which run on the DMZ subnet, on the computer named mccas0.

The FileWriter works as a multi-threaded standalone Python v3 program, and the FileWriterMonitor program is a simple shell script that runs periodically to ensure data is being recorded. All four instances of the FileWriter currently run on the same computer. The FileWriterMonitor script works as a single instance which checks all four FileWriters and the files they generate.

The FileWriter and FileWriterMonitor both reside under $PACKAGE_TOP/bsasStore/current (/afs/slac/g/lcls/package/bsasStore/current) which is a symbolic link to a directory holding the current versions.

The FileWriter python script can be found under /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriter

The FileWriterMonitor bash script can be found under /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor

The python scripts monitor an EPICS v7 PV, an NTTable structure. When starting, the software begins recording data in a scratch file in the /tmp directory, in a unique filename.

When the requested file size is met or the expected time duration expires, the scratch file data is copied to the data directory in a separate thread of execution, while new data is placed in a new scratch file.
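The "size or duration, whichever comes first" rule can be pictured with the following Python sketch. It is an illustration of the rule only, not the code of the actual FileWriter: the class name `RotationPolicy` and its methods are hypothetical, and the limits are the 60 minutes and 1.5 GB quoted earlier in this guide (taking 1 GB as 10^9 bytes, which is an assumption).

```python
import time

MAX_SECONDS = 60 * 60        # 60-minute period quoted earlier in this guide
MAX_BYTES = 1_500_000_000    # 1.5 GB limit, assuming 1 GB = 10**9 bytes


class RotationPolicy:
    """Decide when the current scratch file should be closed and copied."""

    def __init__(self, max_seconds=MAX_SECONDS, max_bytes=MAX_BYTES,
                 clock=time.monotonic):
        self.max_seconds = max_seconds
        self.max_bytes = max_bytes
        self.clock = clock
        self.start_file()

    def start_file(self):
        """Reset the counters when a new scratch file is opened."""
        self.started = self.clock()
        self.bytes_written = 0

    def record(self, nbytes):
        """Account for data appended to the scratch file."""
        self.bytes_written += nbytes

    def should_rotate(self):
        """True once either the size limit or the time limit is reached."""
        elapsed = self.clock() - self.started
        return self.bytes_written >= self.max_bytes or elapsed >= self.max_seconds


# A fake clock makes the example deterministic.
fake_now = [0.0]
policy = RotationPolicy(clock=lambda: fake_now[0])
policy.record(1_200_000_000)
print(policy.should_rotate())  # False: under both limits
fake_now[0] = 3600.0
print(policy.should_rotate())  # True: the hour has elapsed
```

In a writer built along these lines, a True result would trigger handing the finished scratch file to a separate copying thread and calling `start_file()` for the next one, so acquisition does not wait on the copy.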

All four instances of the FileWriter are run when the server (mccas0) starts. Four files contained in /etc/init.d are automatically run at boot time, or can be executed by a privileged user to stop, start or restart an instance of the FileWriter manually.

| Startup File | Purpose |

|---|---|

| /etc/init.d/st.bsasFileWriterCUHXR | Start a management script to record data from the normal conducting linac destined for the hard x-ray undulators |

| /etc/init.d/st.bsasFileWriterCUSXR | Start a management script to record data from the normal conducting linac destined for the soft x-ray undulators |

| /etc/init.d/st.bsasFileWriterSCHXR | Start a management script to record data from the superconducting linac destined for the hard x-ray undulators |

| /etc/init.d/st.bsasFileWriterSCSXR | Start a management script to record data from the superconducting linac destined for the soft x-ray undulators |

Each of these scripts runs a corresponding management script which provides configuration details to the python-based FileWriters. These scripts also set environment variables to ensure proper recording of data for each FileWriter.

Three files are therefore used to start each FileWriter, as shown below. The Management Scripts and the Configuration Files are all located under /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriter

| Startup Script (/etc/init.d) | Management Script | Configuration File | Purpose |

|---|---|---|---|

| st.bsasFileWriterCUHXR | bsas-CUHXR.conf | bsas-CUHXR.conf | Record data: Normal conducting linac, hard x-ray undulators |

| st.bsasFileWriterCUSXR | bsas-CUSXR.conf | bsas-CUSXR.conf | Record data: Normal conducting linac, soft x-ray undulators |

| st.bsasFileWriterSCHXR | bsas-SCHXR.conf | bsas-SCHXR.conf | Record data: Superconducting linac, hard x-ray undulators |

| st.bsasFileWriterSCSXR | bsas-SCSXR.conf | bsas-SCSXR.conf | Record data: Superconducting linac, soft x-ray undulators |

Each of the management scripts runs a common python 3 program called h5tablewriter.py, located in that same directory (/afs/slac/g/lcls/package/bsasStore/current/bsasFileWriter).

A single monitor script runs on mccas0 to ensure data is being recorded by all four FileWriter processes. The script resides in /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor, named bsamonitor.bash. There is also a UNIX Man page in that same directory, named bsamonitor.1 which can be viewed by typing the following command:

man /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor/bsamonitor.1

The Monitor script is run every five minutes by a standard Linux cron daemon, based on the following configuration entry in a crontab file:

mccas0 1,6,11,16,21,26,31,36,41,46,51,56 * * * * ( /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor/bsamonitor.bash -r ) > /tmp/bsamonitor.log 2>&1

One can run the FileWriterMonitor software manually on mccas0 to check the status. The following command can be typed:

/afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor/bsamonitor.bash -h

The -h option prints a help message:

This program monitors and can restart one or more Beam Synchronous

Acquisition (BSA) Service file writer processes.

Usage: bsamonitor.bash [-r][-v][-h]

Without arguments, it silently checks the status of all file

writers and exits with an error code if all are not running or

there are problems with the data files.

Option:

-r: Restart the BSA file writer(s) associated with discovered problems

-v: Display extra messages while working

-h: Display this message

A log file is updated only when attempting to restart BSA file writers with the '-r' option.

Note: the bsamonitor script will only work properly on the computer running the FileWriter software, currently mccas0.

The -r option will only take effect for users with sufficient system privilege.

The -v option is available to anybody, showing the status of the current BSAS data recording session.
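Because the script reports problems through its exit status when run without arguments, another program can use it as a simple health check. The Python sketch below shows the idea. It assumes the usual convention that an exit code of 0 means "all file writers are fine", which the help text implies but does not state; the function name `file_writers_ok` is hypothetical, and stand-in commands are used so the example runs on any machine.

```python
import subprocess
import sys


def file_writers_ok(command) -> bool:
    """Run a status command quietly; True when it exits with code 0."""
    result = subprocess.run(command,
                            stdout=subprocess.DEVNULL,
                            stderr=subprocess.DEVNULL)
    return result.returncode == 0


# On mccas0 the command would be the monitor script with no options:
MONITOR = ["/afs/slac/g/lcls/package/bsasStore/current/"
           "bsasFileWriterMonitor/bsamonitor.bash"]

# Stand-in commands so the sketch runs anywhere:
healthy = [sys.executable, "-c", "raise SystemExit(0)"]
failing = [sys.executable, "-c", "raise SystemExit(1)"]
print(file_writers_ok(healthy), file_writers_ok(failing))  # True False
```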

Each Collector maintains a list of PVs from which data is acquired. As mentioned above, the list itself is contained within another PV as the Collector is running. The Collector reads those PV names from a file when it starts, and can re-read the file when a few PV names change, as it is running. It is recommended that the Collector be restarted if many PVs are being added or the entire PV list is being re-synchronized with the target beamline.

People with system privileges will be able to change the list and update the corresponding Collector.

The target beamline PV list files exist on lcls-daemon0 and can be modified by the users named laci or softegr, or members of the lcls group. Each soft IOC has a PV list file which is specific to that IOC, located within a directory having the following form:

/usr/local/lcls/epics/iocCommon/<IOC Name>/iocSpecificRelease/iocBoot/lcls/<IOC Name>

For example, the directory:

/usr/local/lcls/epics/iocCommon/sioc-sys0-ba11/iocSpecificRelease/iocBoot/lcls/sioc-sys0-ba11/

will contain a file with the list of PVs for sioc-sys0-ba11. Specifically, the file name is shown in the following table and for this example, can be found under:

/usr/local/lcls/epics/iocCommon/sioc-sys0-ba11/iocSpecificRelease/iocBoot/lcls/sioc-sys0-ba11/signals.cu-hxr.prod

Where to find the PV list files on lcls-daemon0:

| PV List File | Purpose |

|---|---|

| .../iocBoot/lcls/sioc-sys0-ba11/signals.cu-hxr.prod | List of PVs to be acquired from the normal conducting Linac, targeting the hard x-ray line |

| .../iocBoot/lcls/sioc-sys0-ba12/signals.cu-sxr.prod | List of PVs to be acquired from the normal conducting Linac, targeting the soft x-ray line |

| .../iocBoot/lcls/sioc-sys0-ba21/signals.sc-hxr.prod | List of PVs to be acquired from the superconducting Linac, targeting the hard x-ray line |

| .../iocBoot/lcls/sioc-sys0-ba22/signals.sc-sxr.prod | List of PVs to be acquired from the superconducting Linac, targeting the soft x-ray line |
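The full path of each PV list file follows from the soft IOC name and the directory form given above. A minimal Python sketch of that mapping is shown here; the names `IOC_COMMON`, `SIGNAL_FILES`, `boot_directory` and `signals_file` are hypothetical and exist only for this example, while the paths and file names are those from the table.

```python
from pathlib import PurePosixPath

IOC_COMMON = PurePosixPath("/usr/local/lcls/epics/iocCommon")

# Soft IOC name -> PV list file name, as listed in the table above.
SIGNAL_FILES = {
    "sioc-sys0-ba11": "signals.cu-hxr.prod",
    "sioc-sys0-ba12": "signals.cu-sxr.prod",
    "sioc-sys0-ba21": "signals.sc-hxr.prod",
    "sioc-sys0-ba22": "signals.sc-sxr.prod",
}


def boot_directory(ioc: str) -> PurePosixPath:
    """Directory holding the IOC-specific startup and PV list files."""
    return IOC_COMMON / ioc / "iocSpecificRelease" / "iocBoot" / "lcls" / ioc


def signals_file(ioc: str) -> PurePosixPath:
    """Full path of the PV list file for one soft IOC."""
    return boot_directory(ioc) / SIGNAL_FILES[ioc]


for ioc in SIGNAL_FILES:
    print(signals_file(ioc))
```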

The EPICS Directory Service can be used to populate the list of PVs from any of the target beamlines. The steps below must be performed on lcls-daemon0.

These steps need to be described in detail.

Generate: The eget command is used to find PV names in the target beamline. The easiest way to do so is to change to the directory that corresponds to the target beamline, as mentioned above. Before generating a new file, copy the existing one as a backup in case problems arise. When ready, use a command from the following table.

| Target Beamline | Command to Generate a New PV List |

|---|---|

| Find PVs in the normal conducting Linac, targeting the hard x-ray line | eget -ts ds -atag=LCLS.CUH.BSA.rootnames > signals.cu-hxr.prod |

| Find PVs in the normal conducting Linac, targeting the soft x-ray line | eget -ts ds -atag=LCLS.CUS.BSA.rootnames > signals.cu-sxr.prod |

| Find PVs in the superconducting Linac, targeting the hard x-ray line | eget -ts ds -atag=LCLS.SCH.BSA.rootnames > signals.sc-hxr.prod |

| Find PVs in the superconducting Linac, targeting the soft x-ray line | eget -ts ds -atag=LCLS.SCS.BSA.rootnames > signals.sc-sxr.prod |
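After generating a new list, comparing it with the backup copy shows how many PVs were added or removed, which can inform the choice between letting the Collector re-read the file and restarting it. The Python sketch below performs that comparison on the text of the two files. It assumes one PV name per line, which this guide does not confirm; the function names and the sample PV names are hypothetical placeholders.

```python
def read_pv_names(text: str) -> set:
    """Collect PV names, assuming one name per non-blank line."""
    return {line.strip() for line in text.splitlines() if line.strip()}


def compare_pv_lists(old_text: str, new_text: str) -> dict:
    """Summarise the differences between a backup list and a new list."""
    old, new = read_pv_names(old_text), read_pv_names(new_text)
    return {
        "added": sorted(new - old),
        "removed": sorted(old - new),
        "unchanged": len(old & new),
    }


backup = "PV:A\nPV:B\nPV:C\n"
fresh = "PV:B\nPV:C\nPV:D\nPV:E\n"
print(compare_pv_lists(backup, fresh))
# {'added': ['PV:D', 'PV:E'], 'removed': ['PV:A'], 'unchanged': 2}
```

In practice the two strings would come from reading the backup file and the newly generated signals file in the soft IOC's directory.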

Restart: To restart the Collector for the desired target beamline, use the command /etc/init.d/st.<IOC Name> restart. For example, when restarting the Collector for the normal conducting Linac targeting the hard x-ray line, one would type:

/etc/init.d/st.sioc-sys0-ba11 restart

The internal PV storing the list can be updated as the IOC is running, so it need not restart if only a few PVs are changing.

To add a new PV or change PVs on the list, one must

Once the appropriate file has been edited, one of the following commands can be used to make the list known to the corresponding Collector by changing its file list PV. Each command must be run from the corresponding directory.

The iocSpecificRelease directory contains the contents of the soft IOC as built with EPICS. The iocSpecificRelease entry is actually a symbolic link pointing to the current version of this IOC. Several previous version may exist, and the iocSpecificRelease actually points to the directory named current, which in turn points to the version currently being used.

The startup.cmd file sets an environment variable indicating the location of this soft IOC's directory area (pointed to by iocSpecificRelease) and provides itself as input to the executable IOC file within that current version. The script then executes commands within a common script located under /usr/local/lcls/epics/iocCommon/common/st.cmd.soft. This executes some other common scripts then finally refers back to the iocSpecificRelease directory, to run the IOC-specific st.cmd startup script.

As an example, the st.cmd file for the soft IOC sioc-sys0-ba11 mentioned above is located under

/usr/local/lcls/epics/iocCommon/sioc-sys0-ba11/iocSpecificRelease/iocBoot/lcls/sioc-sys0-ba11/st.cmdThe FileWriter software consists of the writing process itself and a monitoring program, both of which run on the DMZ subnet, on the computer named mccas0.

The FileWriter works as a multi-threaded standalone Python v3 program, and the FileWriterMonitor program is a simple shell script that runs periodically to ensure data is being recorded. All four instances of the FileWriter currently run on the same computer. The FileWriterMonitor script works as a single instance which checks all four FileWriters and the files they generate.

The FileWriter and FileWriterMonitor both reside under $PACKAGE_TOP/bsasStore/current (/afs/slac/g/lcls/package/bsasStore/current) which is a symbolic link to a directory holding the current versions.

The FileWriter python script can be found under /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriter

The FileWriterMonitor bash script can be found under /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor

The python scripts monitor an EPICS v7 PV, an NTTable structure. When starting, the software begins recording data in a scratch file in the /tmp directory, in a unique filename.

When the requested file size is met or the expected time duration expires, the scratch file data is copied to the data directory in a separate thread of execution, while new data is placed in a new scratch file.

All four instances of the FileWriter are run when the server (mccas0) starts. Four files contained in /etc/init.d are automatically run at boot time, or can be executed by a privileged user to stop, start or restart an instance of the FileWriter manually.

| Startup File | Purpose |

|---|---|

| /etc/init.d/st.bsasFileWriterCUHXR | Start a management script to record data from the normal conducting linac destined for the hard x-ray undulators |

| /etc/init.d/st.bsasFileWriterCUSXR | Start a management script to record data from the normal conducting linac destined for the soft x-ray undulators |

| /etc/init.d/st.bsasFileWriterSCHXR | Start a management script to record data from the superconducting linac destined for the hard x-ray undulators |

| /etc/init.d/st.bsasFileWriterSCSXR | Start a management script to record data from the superconducting linac destined for the soft x-ray undulators |

Each of these scripts run a corresponding management script which provides configuration details to the python-based FileWriters. These scripts also set environment variables to ensure proper recording of data for each FileWriter.

Three files are therefore used to start each FileWriter, as shown below. The Management Scripts and the Configuration Files are all located under /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriter

| Startup Script (/etc/init.d) | Management Script | Configuration File | Purpose |

|---|---|---|---|

| st.bsasFileWriterCUHXR | bsas-CUHXR.conf | bsas-CUHXR.conf | Record data: Normal conducting linac, hard x-ray undulators |

| st.bsasFileWriterCUSXR | bsas-CUSXR.conf | bsas-CUSXR.conf | Record data: Normal conducting linac, soft x-ray undulators |

| st.bsasFileWriterSCHXR | bsas-SCHXR.conf | bsas-SCHXR.conf | Record data: Superconducting linac, hard x-ray undulators |

| st.bsasFileWriterSCSXR | bsas-SCSXR.conf | bsas-SCSXR.conf | Record data: Superconducting linac, soft x-ray undulators |
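The naming pattern in the tables above can be expressed as a short Python helper. This is a convenience sketch only: the function name startup_files and the dictionary layout are hypothetical, and the file names are simply assembled from the patterns shown in the tables.

```python
# Beamline codes and purposes, taken from the table above.
BEAMLINES = {
    "CUHXR": "Normal conducting linac, hard x-ray undulators",
    "CUSXR": "Normal conducting linac, soft x-ray undulators",
    "SCHXR": "Superconducting linac, hard x-ray undulators",
    "SCSXR": "Superconducting linac, soft x-ray undulators",
}

PACKAGE_DIR = "/afs/slac/g/lcls/package/bsasStore/current/bsasFileWriter"


def startup_files(code):
    """Return the startup script and configuration file paths for one code."""
    if code not in BEAMLINES:
        raise KeyError(f"unknown beamline code: {code}")
    return {
        "startup_script": f"/etc/init.d/st.bsasFileWriter{code}",
        "config_file": f"{PACKAGE_DIR}/bsas-{code}.conf",
        "purpose": BEAMLINES[code],
    }
```

For example, startup_files("SCSXR")["startup_script"] gives /etc/init.d/st.bsasFileWriterSCSXR.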

Each of the management scripts runs a common python 3 program called h5tablewriter.py, located in that same directory (/afs/slac/g/lcls/package/bsasStore/current/bsasFileWriter).

A single monitor script runs to ensure data is being recorded by all four FileWriter processes. The script resides in /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor, named bsamonitor.bash. There is also a UNIX Man page in that same directory, named bsamonitor.1 which can be viewed by typing the following command:

man /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor/bsamonitor.1

The Monitor script is run every five minutes by the standard Linux cron daemon, based on the following configuration entry in a crontab file:

mccas0 1,6,11,16,21,26,31,36,41,46,51,56 * * * * ( /afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor/bsamonitor.bash -r ) > /tmp/bsamonitor.log 2>&1

One can run the FileWriterMonitor software manually to check the status. The following command can be typed:

/afs/slac/g/lcls/package/bsasStore/current/bsasFileWriterMonitor/bsamonitor.bash -h

The -h option prints a help message:

This program monitors and can restart one or more Beam Synchronous

Acquisition (BSA) Service file writer processes.

Usage: bsamonitor.bash [-r][-v][-h]

Without arguments, it silently checks the status of all file

writers and exits with an error code if all are not running or

there are problems with the data files.

Option:

-r: Restart the BSA file writer(s) associated with discovered problems

-v: Display extra messages while working

-h: Display this message

A log file is updated only when attempting to restart BSA file writers with the '-r' option.

The -r option will only take effect for users with sufficient system privilege.

The -v option is available to anybody, showing the status of the current BSAS data recording session.
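Because the monitor reports problems through its exit code, its result can also be checked from another program. The following Python sketch assumes that the "error code" mentioned in the help message is a non-zero exit status; the function name writers_healthy and its parameters are hypothetical.

```python
import subprocess

MONITOR = ("/afs/slac/g/lcls/package/bsasStore/current/"
           "bsasFileWriterMonitor/bsamonitor.bash")


def writers_healthy(command=(MONITOR,), verbose=False):
    """Run the monitor; return (True, output) when it exits with status 0.

    Assumption: any non-zero exit status means a file writer is not
    running or there is a problem with the data files.
    """
    argv = list(command) + (["-v"] if verbose else [])
    result = subprocess.run(argv, capture_output=True, text=True)
    return result.returncode == 0, result.stdout
```

The -r option is deliberately left out of this sketch, so calling it only checks status and never restarts a file writer.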

Each Collector maintains a list of PVs from which data is acquired. As mentioned above, the list itself is contained within another PV as the Collector is running. People with system privileges will be able to change the list and update the corresponding Collector.

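When a Collector starts, it reads the PV list from a file. The internal PV storing the list can be updated while the IOC is running, so the IOC need not restart when PVs change. To add a new PV or change PVs on the list, one must edit the appropriate PV list file and then update the corresponding Collector's list PV, as described below.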

The PV list files exist on lcls-daemon0 and can be modified by the users named laci or softegr, or members of the lcls group. Each soft IOC has a PV list file which is specific to that IOC, located within a directory having the following form:

/usr/local/lcls/epics/iocCommon/<IOC Name>/iocSpecificRelease/iocBoot/lcls/

For example:

/usr/local/lcls/epics/iocCommon/sioc-sys0-ba11/iocSpecificRelease/iocBoot/lcls/

will contain a file with the list of PVs for sioc-sys0-ba11.

| PV List File | Purpose |

|---|---|

| sioc-sys0-ba11/signals.cu-hxr.prod | List of PVs to be acquired from the normal conducting Linac, targeting the hard x-ray line |

| sioc-sys0-ba12/signals.cu-sxr.prod | List of PVs to be acquired from the normal conducting Linac, targeting the soft x-ray line |

| sioc-sys0-ba21/signals.sc-hxr.prod | List of PVs to be acquired from the superconducting Linac, targeting the hard x-ray line |

| sioc-sys0-ba22/signals.sc-sxr.prod | List of PVs to be acquired from the superconducting Linac, targeting the soft x-ray line |
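The directory form and the table above can be combined to locate each list file. The Python sketch below assumes that each list file sits directly in its IOC's iocBoot/lcls directory; the function name pv_list_path is hypothetical.

```python
from pathlib import PurePosixPath

IOC_COMMON = PurePosixPath("/usr/local/lcls/epics/iocCommon")

# IOC name -> PV list file name, taken from the table above.
PV_LIST_FILES = {
    "sioc-sys0-ba11": "signals.cu-hxr.prod",
    "sioc-sys0-ba12": "signals.cu-sxr.prod",
    "sioc-sys0-ba21": "signals.sc-hxr.prod",
    "sioc-sys0-ba22": "signals.sc-sxr.prod",
}


def pv_list_path(ioc_name):
    """Return the assumed full path of the PV list file for one soft IOC."""
    return (IOC_COMMON / ioc_name / "iocSpecificRelease" / "iocBoot"
            / "lcls" / PV_LIST_FILES[ioc_name])
```

PurePosixPath is used so the paths are built the same way on any platform, without touching the file system.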

Once the appropriate file has been edited, one of the following commands can be used to make the list known to the corresponding Collector by changing its file list PV. Each command must be run from the corresponding directory.

| Command to Update the PV List | Purpose |

|---|---|

| cat signals.cu-hxr.prod | xargs pvput BSAS:SYS0:1:CUHXR_SIG X | Update the PVs to be acquired from the normal conducting Linac, targeting the hard x-ray line |

| cat signals.cu-sxr.prod | xargs pvput BSAS:SYS0:1:CUHXR_SIG X | Update the PVs to be acquired from the normal conducting Linac, targeting the soft x-ray line |

| cat signals.sc-hxr.prod | xargs pvput BSAS:SYS0:1:CUHXR_SIG X | Update the PVs to be acquired from the superconducting Linac, targeting the hard x-ray line |

| cat signals.sc-sxr.prod | xargs pvput BSAS:SYS0:1:CUHXR_SIG X | Update the PVs to be acquired from the superconducting Linac, targeting the soft x-ray line |
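To preview what one of these commands will send before running it, the argument list that xargs assembles can be reproduced in Python. This is a rough sketch: the function name build_pvput_command is hypothetical, and shlex.split only approximates the way xargs splits its input on whitespace and quotes.

```python
import shlex


def build_pvput_command(list_file, list_pv):
    """Build the argument list that `cat FILE | xargs pvput PV X` would run."""
    with open(list_file, encoding="utf-8") as handle:
        # Every whitespace-separated entry in the file becomes one argument.
        signals = shlex.split(handle.read())
    # "pvput", the list PV name and the literal "X" come from the command
    # table above; the signal names from the file are appended after them.
    return ["pvput", list_pv, "X", *signals]
```

The returned list can be printed for review, or passed to subprocess.run(cmd, check=True) to perform the update. For very long lists xargs may split the input across several pvput invocations, which this sketch does not reproduce.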

Last modified: Mon Sep 26 10:47:21 PDT 2022