RCE / HSIO Distribution for ATLAS Applications (Mar/2015)

General Information

The generic high-bandwidth DAQ development based on the RCE concept on ATCA is a well-known development platform for the ATLAS upgrade. The concept has also been adopted by many other experiments (LCLS, LSST, LBNE, HPS...), giving a broad user community in which everyone benefits from common support and from utilities developed in any one project. The first generation of RCEs, in combination with the HSIO, served the ATLAS pixel IBL readout for stave/system/installation tests, pixel upgrade test stands and test beam setups. The muon CSC readout replacement commissioned during LS1 is the first ATCA deployment in ATLAS, with a design based on the RCE concept.

The current generation (Gen-III) of RCEs is based on the System on Chip (SoC) technology offered by the XILINX ZYNQ-7000 series, which is a natural platform to support the processor-centric RCE concept. The CSC readout replacement and the Mar/2015 joint production for ATLAS upgrade projects are based on this version. The latest updates on the Gen-III development can be found in the Jan/2015 RCE training workshops at CERN/UK, while the CSC readout PRR shows a full-scale application example.

The full bandwidth offered by a whole COB (Cluster On Board, the main ATCA motherboard) is significant overkill for most test stands. Simpler test stands at the level of 1-2 GBT links or a moderate number of slow links (such as one IBL stave) can instead consider the upgraded HSIO-II combined with an RCE mezzanine card as a compact standalone bench-top DAQ system. For those interested in exploring large-system issues, with many GBT-like links and large-volume data transfers between ATCA boards, the COB is a powerful platform. While there is already plenty to explore in what the COB and the standard mezzanine cards provide, a major motivation behind the mezzanine structure is to allow easy future upgrades and, more importantly, to facilitate the development of your own ideas (e.g. a GPU on a new type of mezzanine), so that you can make fast progress by taking advantage of the COB/RCE infrastructure already provided.

Hardware Components

The versatility built into the new generation of RCE/COB infrastructure also means that there are options to consider in how to configure a test system to best suit your own needs. We discuss the various components of the hardware setup below so that you can determine the configuration of your test stand.

- ATCA test stand infrastructure

This includes the ATCA shelf (crate), server computer and local network setup. The dedicated Test Stand Infrastructure page will guide you through the choices. You will need to purchase this hardware yourself (note: an ATCA shelf purchase may have a long lead time), while the detailed instructions used for existing test stands will be updated to assist with the actual setup.

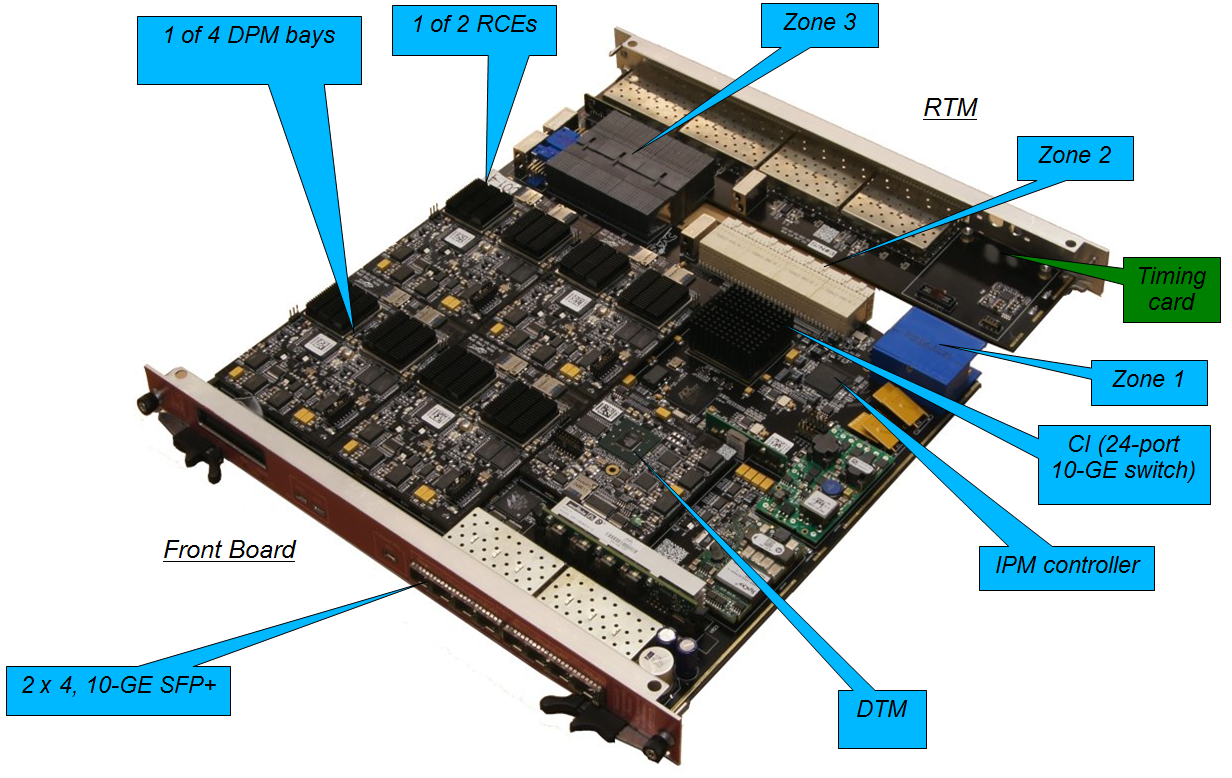

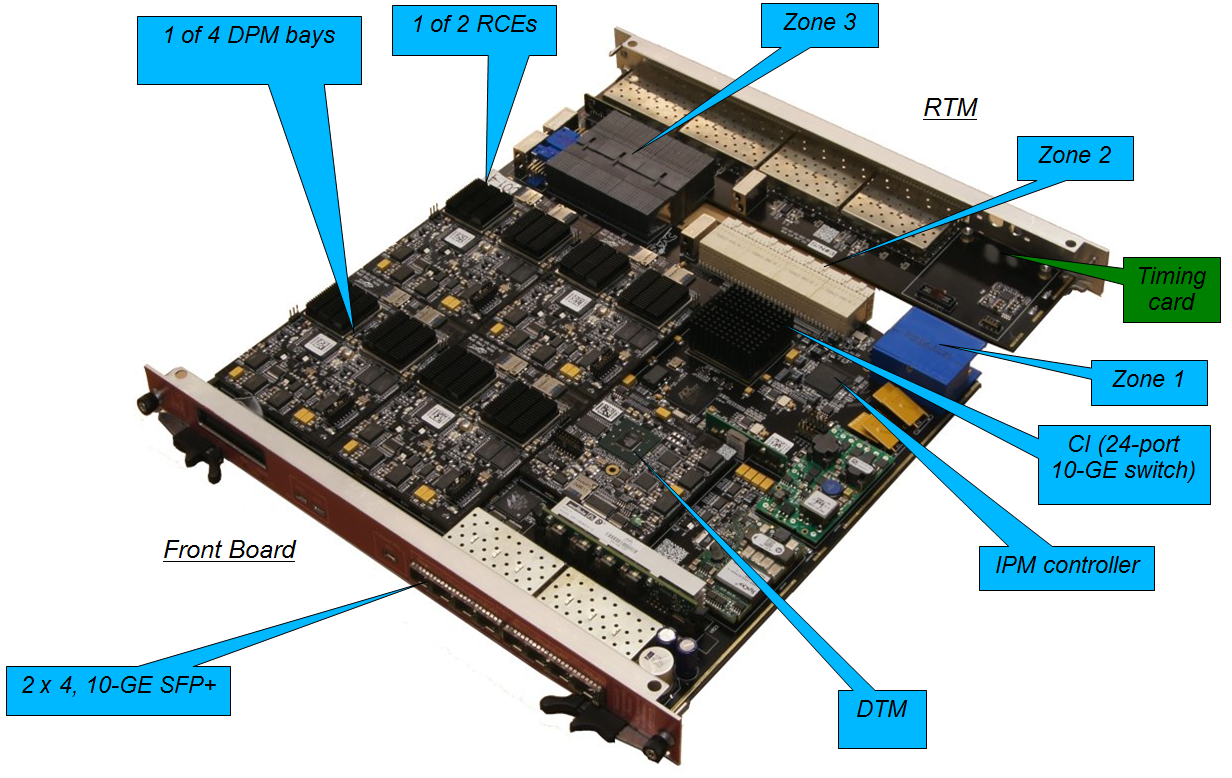

- COB

The COB (Cluster on Board) is the motherboard/carrier ATCA board. It provides the power infrastructure, with an IPM interface for control and monitoring. It hosts the connections to the ATCA backplane and the user I/O through the P3 connector, as well as the front panel displays and Ethernet ports. The embedded Fulcrum network switch ASIC is also mounted directly on the COB PCB.

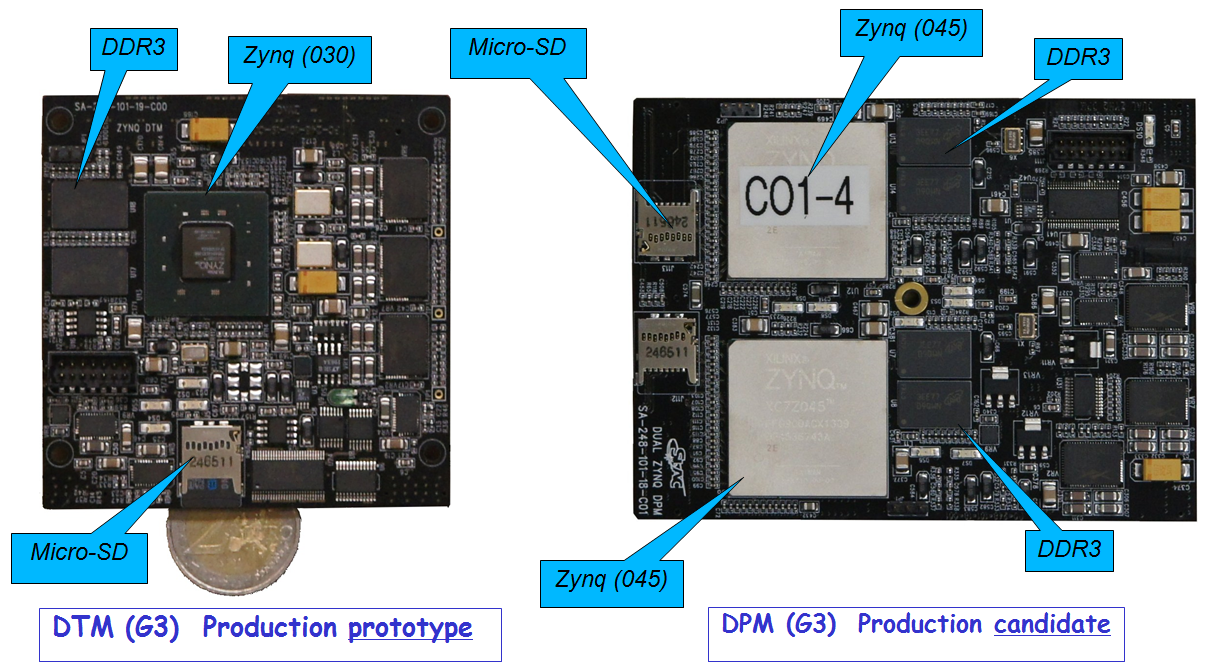

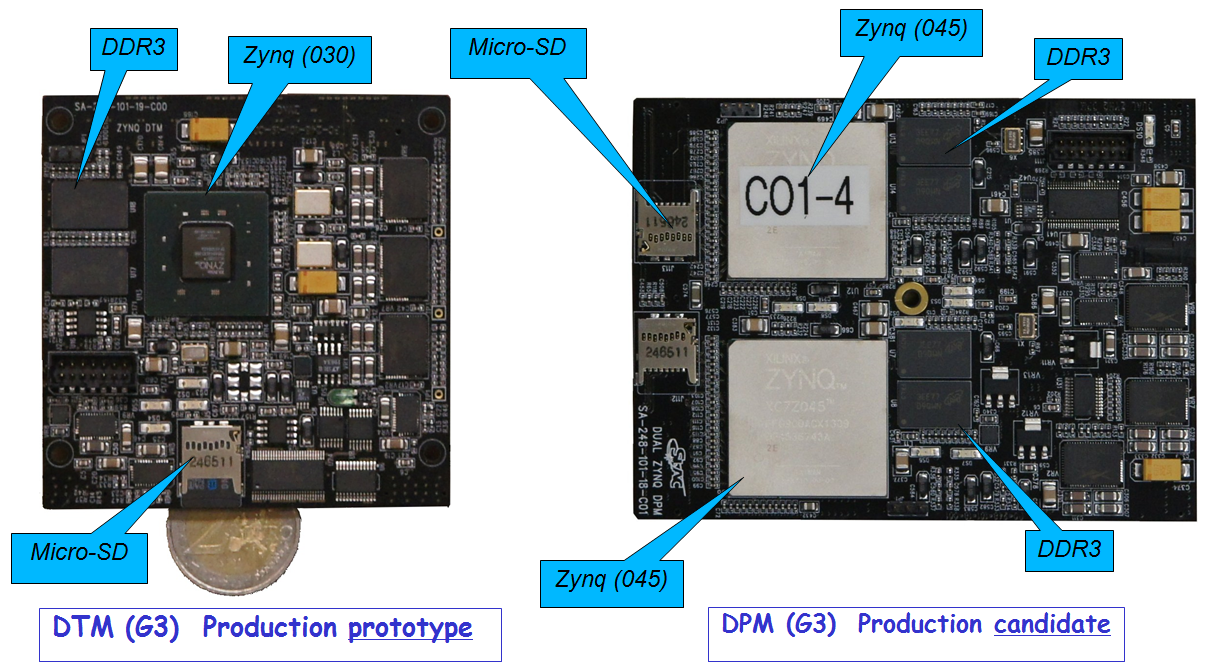

- DPM

The DPM (Data Processing Module) is the main workhorse mezzanine card, hosting the processing RCEs with two RCEs per DPM. Each processing RCE is based on a XILINX ZYNQ XC7Z045-2FFG900E with dual Cortex-A9 ARM processors and ~7 times more logic resources than a Virtex-5. Up to 12 MGTs can be used for user I/O, but one should not count on processing more than 10 Gb/s of input per RCE initially. There are four DPM bays per COB, but a typical test stand is unlikely to need all four populated. One could, for example, populate only one or two DPM bays, which would already allow testing communications between RCEs. Each RCE is essentially an independent DAQ agent, so multiple users can potentially share the same COB (this may be safer if they are on separate DPMs).
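
As a rough planning aid, the figures quoted above (two RCEs per DPM, four DPM bays per COB, about 10 Gb/s of input per RCE initially) can be turned into a small capacity estimate. This is only a sketch: the function and constant names are illustrative, and the 10 Gb/s figure is the conservative planning number from the text, not a measured limit.

```python
# Planning figures taken from the text; all names here are illustrative.
RCES_PER_DPM = 2
DPM_BAYS_PER_COB = 4
INITIAL_INPUT_GBPS_PER_RCE = 10.0  # conservative initial planning figure


def cob_capacity(populated_dpm_bays: int) -> tuple[int, float]:
    """Return (number of processing RCEs, planned aggregate input in Gb/s)."""
    if not 0 <= populated_dpm_bays <= DPM_BAYS_PER_COB:
        raise ValueError(f"a COB has 0..{DPM_BAYS_PER_COB} DPM bays")
    n_rce = populated_dpm_bays * RCES_PER_DPM
    return n_rce, n_rce * INITIAL_INPUT_GBPS_PER_RCE


if __name__ == "__main__":
    for bays in range(1, DPM_BAYS_PER_COB + 1):
        n_rce, gbps = cob_capacity(bays)
        print(f"{bays} DPM bay(s): {n_rce} processing RCEs, ~{gbps:.0f} Gb/s planned input")
```

With one populated bay this gives two processing RCEs, which is already enough to test RCE-to-RCE communication as noted above.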

- DTM

The DTM (Data Transportation Module) is a mezzanine card (one per COB) that manages the network traffic going through the embedded Fulcrum switch. The DTM core is a single RCE based on a ZYNQ XC7Z030-2FBG484E (~2x a Virtex-5 in logic resources). The DTM is also the TTC interface hub: it is connected to the physical interface on the RTM and is responsible for distributing the TTC signal to the DPM RCEs and collecting the Busy signals back to the TTC system. The DTM can also act as a local TTC generator, allowing the COB to operate standalone with built-in functionality that is provided by the LTPs in the present systems. In particular, the 1 GB RCE memory becomes a playback source for programmable arbitrary trigger sequences for testing. In all setups, one needs exactly one DTM per COB. In the upgraded HSIO test stand, a DTM is used as the RCE mezzanine.

The DTM on a COB runs Arch Linux as its operating system.

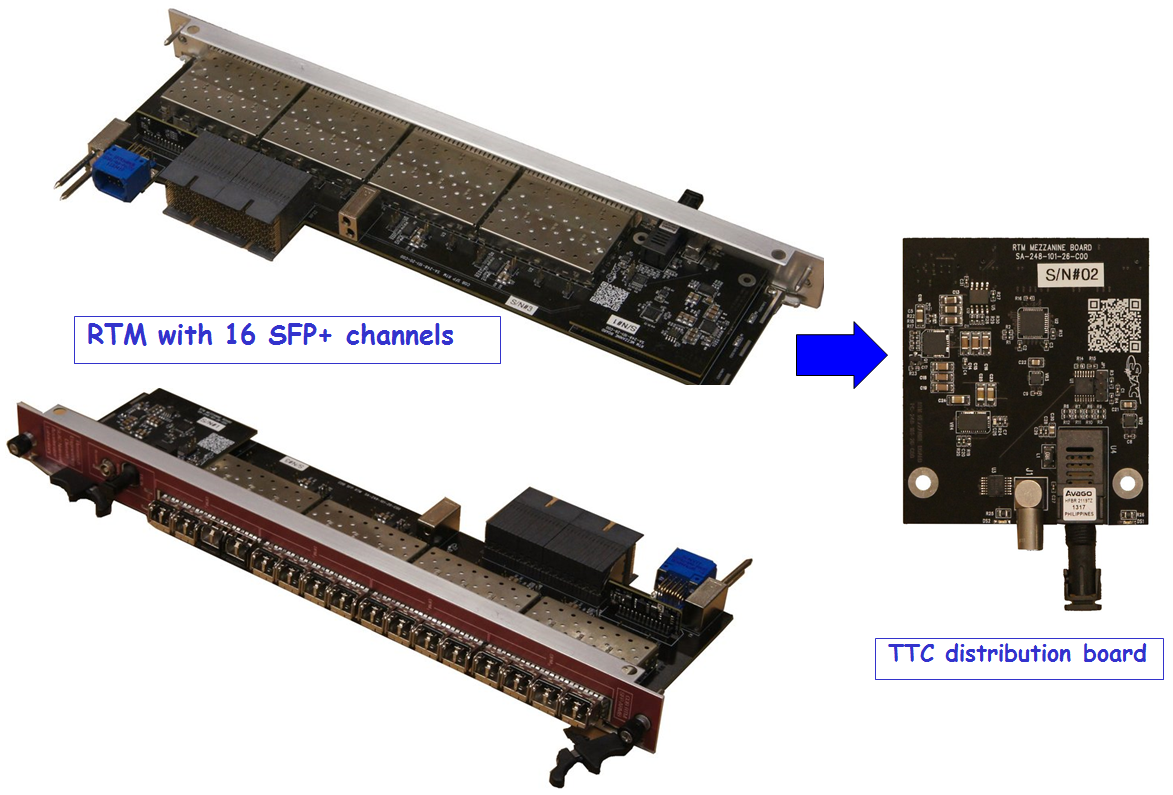

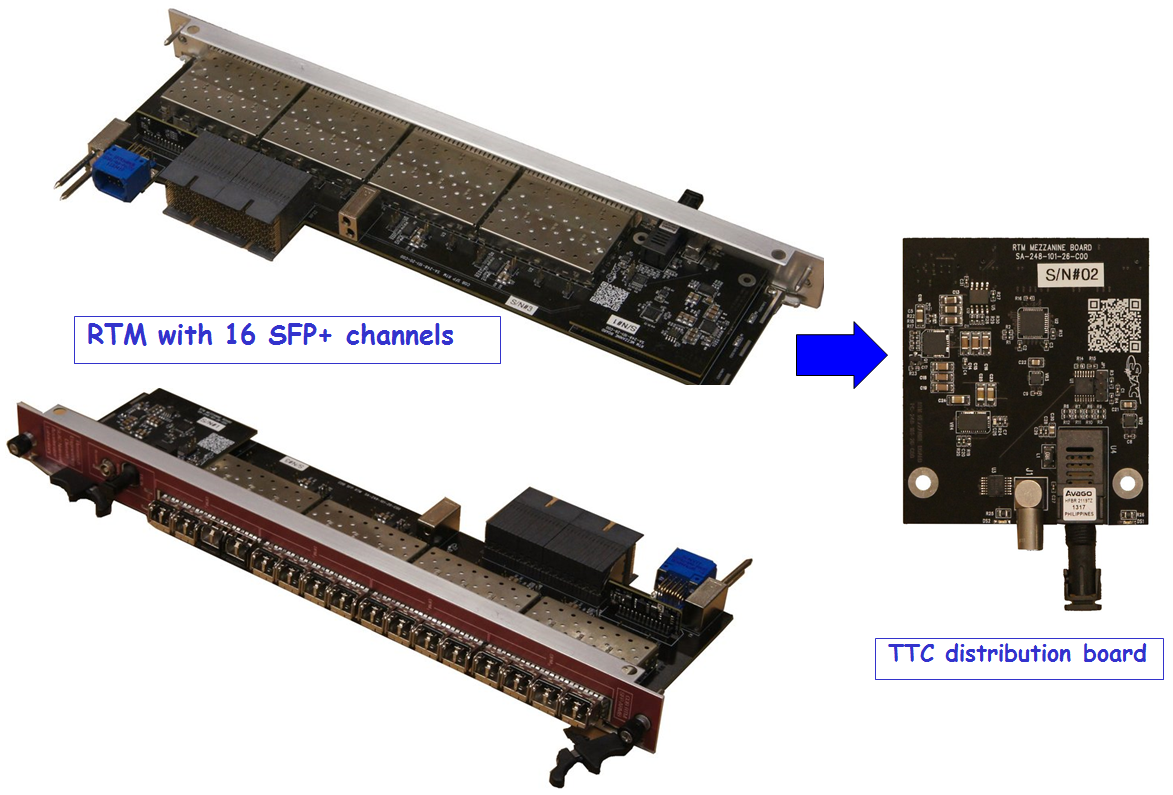

- RTM

The RTM (Rear Transition Module), at the back of the COB and connected via the P3 connector, is mostly a connectivity board with almost no active components besides the transceivers interfacing to the variety of I/O connections. This allows different RTMs to be designed to better match different application use cases, with limited effort spent on carrying other generic functionality. Where higher-quality drivers are needed for long fibers or multi-channel packs, the transceiver cost is likely to dominate. Two types of RTM developed so far can perhaps be considered standard:

- 16xSFP: with 16 single-channel SFP transceiver cages, two channels connected to each RCE. This is ready for distribution in this round.

- 96-ch MPO: with 8+8 TX+RX MPO feed-through connectors linked to miniPODs as transceivers. Each MPO has 12 channels and each RCE is connected to a pair of MPOs for TX+RX with 12 channels each. A new version with 24-channel MPO connectors is also under development. Given the potential variations here, and since this type is mostly relevant only for large-scale production DAQ systems, this distribution will not offer this type of RTM.
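
The channel counts of the two RTM types can be cross-checked against the DPM figures (eight processing RCEs on a fully populated COB, up to 12 user MGTs per RCE). The sketch below is a consistency check only. The class and attribute names are illustrative, and it assumes that each SFP cage or TX+RX fiber pair counts as one bidirectional channel.

```python
from dataclasses import dataclass

# Figures from the DPM description; names are illustrative.
N_PROCESSING_RCES = 8        # 4 DPM bays x 2 RCEs per DPM
MAX_USER_MGTS_PER_RCE = 12   # upper limit of MGTs available for user I/O


@dataclass(frozen=True)
class RtmType:
    name: str
    channels_per_rce: int  # assumed: one channel = one TX+RX pair

    @property
    def total_channels(self) -> int:
        """Channels on the RTM when all eight processing RCEs are present."""
        return self.channels_per_rce * N_PROCESSING_RCES

    def fits_mgt_budget(self) -> bool:
        """True if one RCE has enough user MGTs for its share of channels."""
        return self.channels_per_rce <= MAX_USER_MGTS_PER_RCE


SFP16 = RtmType("16xSFP", channels_per_rce=2)
MPO96 = RtmType("96-ch MPO", channels_per_rce=12)

if __name__ == "__main__":
    for rtm in (SFP16, MPO96):
        print(f"{rtm.name}: {rtm.total_channels} channels, "
              f"within MGT budget: {rtm.fits_mgt_budget()}")
```

Under these assumptions the 16xSFP RTM uses 2 of the 12 user MGTs per RCE, while the 96-ch MPO RTM uses all 12.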

For other needs not quite compatible with either version of the RTM, we would like to identify additional cases of potentially broad interest for additional standard designs, or to work with you to design your own RTM. In any case, the RTM distribution will not include transceivers, given the unknown multiplicity and grades you might need; populating all transceivers would be quite wasteful in most cases. For use cases where the signals are relatively slow (<500 Mb/s) and non-optical, it may be worth considering the HSIO option, which can accommodate many different types of I/O connections and readily aggregates up to 80 slow input links into 3.1 Gb/s streams (up to 6 Gb/s if needed) using the RCE-native PGP protocol for processing on the DTM RCE, provided the total bandwidth fits within two 6 Gb/s streams. This solution was used for the IBL stave test readout.
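
Whether a given set of slow links fits the HSIO option can be estimated with simple arithmetic. The sketch below compares raw link rates against the stream rates quoted above; it ignores PGP framing and line-encoding overhead, so treat a marginal result as "does not fit". The function name and the example link counts and rates are hypothetical.

```python
import math

MAX_SLOW_LINKS = 80   # from the text
MAX_STREAMS = 2       # from the text: total must fit within two streams


def hsio_stream_plan(n_links: int, link_mbps: float, stream_gbps: float = 3.1):
    """Return (total Gb/s, streams needed, fits) for raw rates, no overhead."""
    if n_links > MAX_SLOW_LINKS:
        raise ValueError(f"at most {MAX_SLOW_LINKS} slow input links")
    total_gbps = n_links * link_mbps / 1000.0
    streams_needed = math.ceil(total_gbps / stream_gbps)
    return total_gbps, streams_needed, streams_needed <= MAX_STREAMS


if __name__ == "__main__":
    # Hypothetical configurations, for illustration only.
    for n, mbps, stream in [(80, 40.0, 3.1), (32, 160.0, 6.0), (80, 160.0, 6.0)]:
        total, needed, fits = hsio_stream_plan(n, mbps, stream)
        print(f"{n} links x {mbps:.0f} Mb/s = {total:.2f} Gb/s -> "
              f"{needed} stream(s) at {stream} Gb/s, fits: {fits}")
```

In the last hypothetical case the 12.8 Gb/s total exceeds two 6 Gb/s streams, which is the regime where a COB-based setup becomes the better match.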

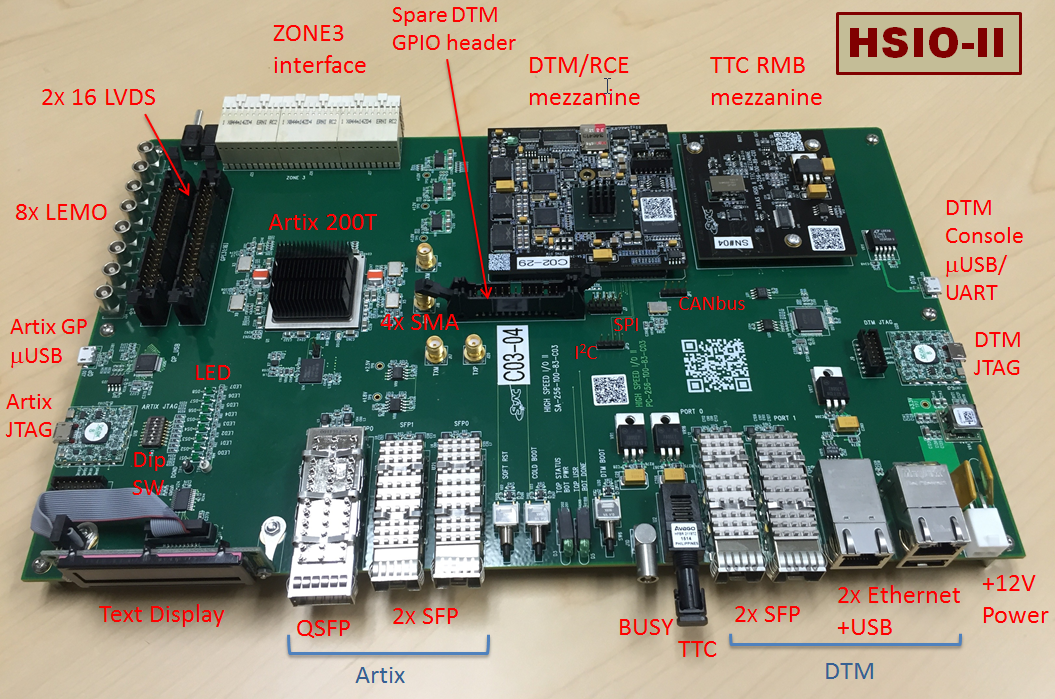

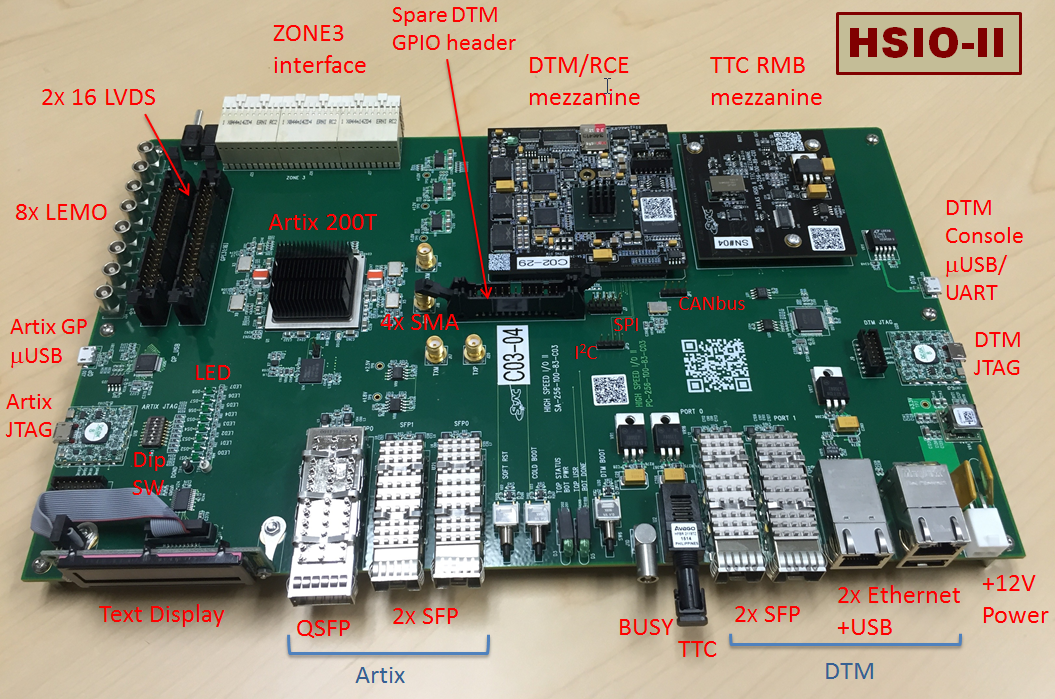

- HSIO-II

The existing HSIO board is a standalone bench-top DAQ board with many common I/O connection interfaces and a Virtex-4 FPGA. It is widely used in silicon strip stave upgrade and pixel test setups to facilitate the variety of I/O connections. The HSIO-II upgrade combines this I/O versatility with the RCE programmability in a self-contained, compact bench-top DAQ system. The status of the HSIO-II upgrade is discussed in a talk at the Feb/2015 ITK week.

The HSIO-II is consistently aiming for ~6 Gb/s MGT links in this version, which would be adequate to work with GBT, while a higher-speed version is expected in the future. Dave Nelson's HSIO-II documentation page contains the user manual, revision details, block diagrams, schematics and PCB layout models. The DTM mezzanine can run either Arch Linux or the RTEMS real-time kernel. Although the HSIO main board provides many I/O connectors, a more common usage is through a dedicated interface board connected to the HSIO P3 connector. Two common HSIO interface boards are:

- Si-strip Interface Board: this remains unchanged since 2011, as a standard Si-strip upgrade stave testing setup.

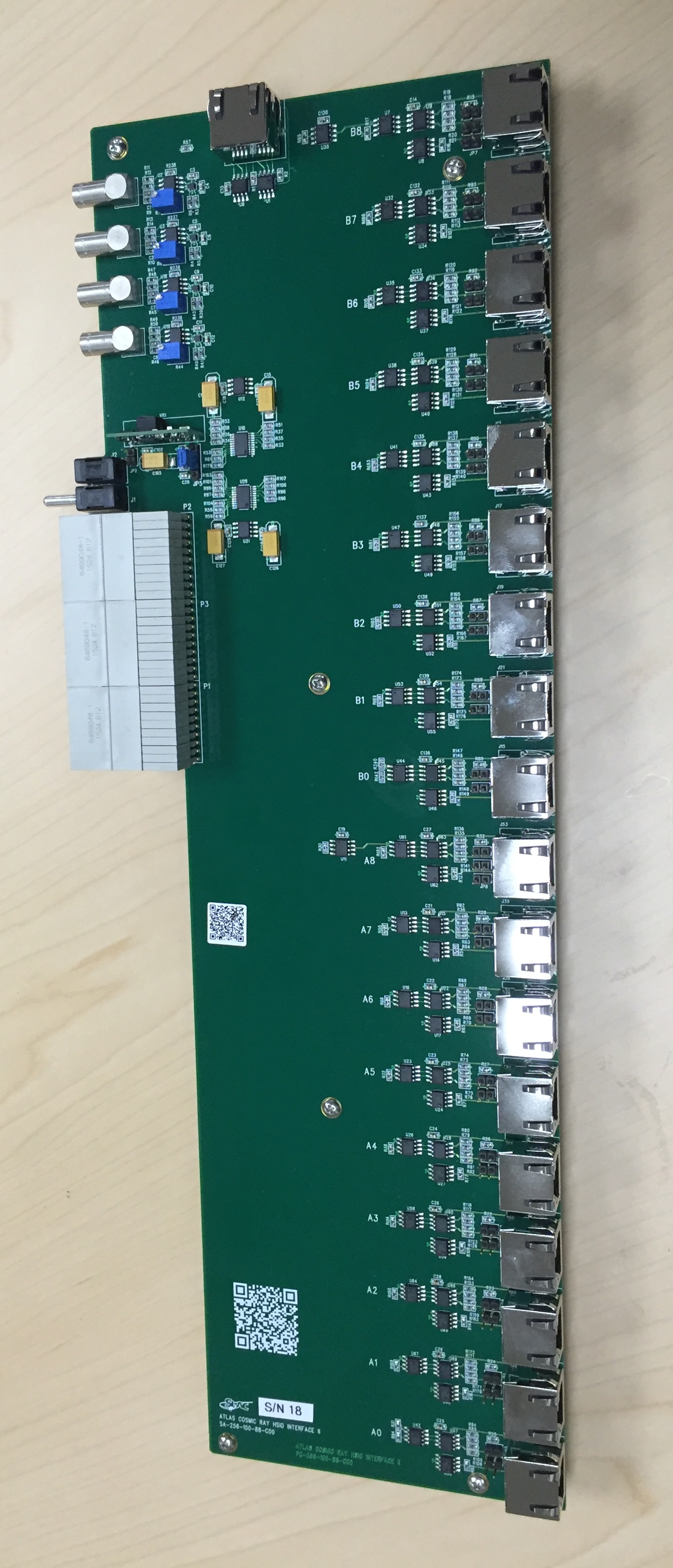

- Pixel Interface Board: this is also commonly known as the pixel cosmic telescope interface, with RJ45 connectors to the test modules. The existing version also has various LV supply sockets built in, but the new version for distribution is a pure readout interface with 18 RJ45 readout ports and with the LV supply sockets removed.

Other variations of interface boards are also under consideration, and feedback on common interests would be useful for identifying such options. The distribution will not include any transceivers for the high-speed links, given the potentially different usage.

The HSIO-II main board can be powered by a typical 12V AC/DC laptop supply (also not supplied, given the variance between countries), e.g. the CUI Inc. ETSA120330U-P5P-SZ (Digi-Key T1197-P5P-ND, $17.44 each) for typical moderate usage, while heavy usage with most high-speed links active could consider a higher current capacity model.

The narrow round plug on the supply that would normally plug into a laptop needs to be cut off and replaced by a simple 12V Molex connector for the HSIO supply.

Pictures


| COB + RTM | DTM and DPM | RTM with 16x SFP+ | HSIO-II | Pixel Interface |
|---|---|---|---|---|

Requests for Joining Production Distribution

The RCE Gen-III COB hardware has already gone through two production batches in 2014-2015 and has been exercised in the new CSC readout with no known issues. Requests will be collected before each forthcoming batch to define the production volume. These requests are likely to be combined with requests from other experiments, so that the larger production volume reduces the unit cost for everyone. Because component prices may vary with time, and the shared cost depends on the expected production volume of each batch, the estimated unit cost will vary from batch to batch. The component costs for the Mar/2015 production batch are listed below.

| Item | Unit Price |

|---|---|

| COB motherboard without DPM/DTM mezzanines | $6200 |

| DPM dual-RCE mezzanine | $3700 |

| DTM mezzanine | $1200 |

| RTM (16xSFP) with TTC mezzanine, but without transceivers | $1800 |

| HSIO-II main board with TTC mezzanine, but without DTM, without transceivers | $3500 |

| HSIO Pixel interface board with 18 RJ45 I/O ports | $1500 |

| HSIO Si-strip stave interface board | $900 |
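
To estimate the cost of a request, the unit prices above can be summed per configuration. The sketch below uses the Mar/2015 prices from the table. The two example orders are hypothetical and are not the minimal examples from the request form; they also exclude the ATCA shelf, transceivers and power supplies, which are not part of the distribution.

```python
# Mar/2015 unit prices in US$, copied from the table; keys are illustrative.
UNIT_PRICE_USD = {
    "COB": 6200,                    # motherboard without DPM/DTM mezzanines
    "DPM": 3700,                    # dual-RCE mezzanine
    "DTM": 1200,
    "RTM_16xSFP": 1800,             # with TTC mezzanine, no transceivers
    "HSIO-II": 3500,                # with TTC mezzanine, no DTM, no transceivers
    "HSIO_pixel_interface": 1500,
    "HSIO_strip_interface": 900,
}


def order_total(order: dict[str, int]) -> int:
    """Sum unit price x quantity; raises KeyError for unknown items."""
    return sum(UNIT_PRICE_USD[item] * qty for item, qty in order.items())


# Hypothetical example orders, for illustration only.
cob_stand = {"COB": 1, "DPM": 1, "DTM": 1, "RTM_16xSFP": 1}
hsio_pixel_stand = {"HSIO-II": 1, "DTM": 1, "HSIO_pixel_interface": 1}

if __name__ == "__main__":
    print(f"COB stand:  ${order_total(cob_stand):,}")         # $12,900
    print(f"HSIO stand: ${order_total(hsio_pixel_stand):,}")  # $6,200
```

Both examples include one DTM, since every COB needs exactly one DTM and the HSIO-II uses a DTM as its RCE mezzanine.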

Please fill in the RCE/HSIO request form with the requested quantities, fund contribution channel and delivery information, and send it to Su Dong by Mar/16/2015. Apologies for the rather short time for collecting requests: many people have been waiting for this production for quite some time and we are trying to get it going as soon as possible. The Excel form also contains two additional tabs with examples of minimal COB and HSIO test stand components. The distribution only covers the items above; you should procure the test infrastructure, such as the ATCA shelf, in advance according to the separate Test Stand Infrastructure page.

You also need to procure the transceivers and power supplies yourself for your specific test stand needs. The majority of components are aimed for delivery by early May. If higher demand for DPMs requires purchasing new ZYNQ Z045 devices, which have a long lead time, delivery of some of the DPMs may be delayed.

For requests from US collaborators, the fund contribution will be managed by US ATLAS internally. For international ATLAS requests, the fund contributions will be collected by US ATLAS through CERN account transfers. An MOU will be drafted between each institution and SLAC+BNL before fund collection. The fund transfers will be requested before production starts, to enable the production. The exchange rate used to convert the quoted US$ amount to CHF will follow the CERN CET exchange rate at the time of the fund transfer. Completed boards can either be shipped directly to your institution (which usually involves import tax for the recipient) or picked up at CERN, as we expect a group of them to be shipped to CERN together.


Su Dong